When a medical device assessment misses real clinical risk

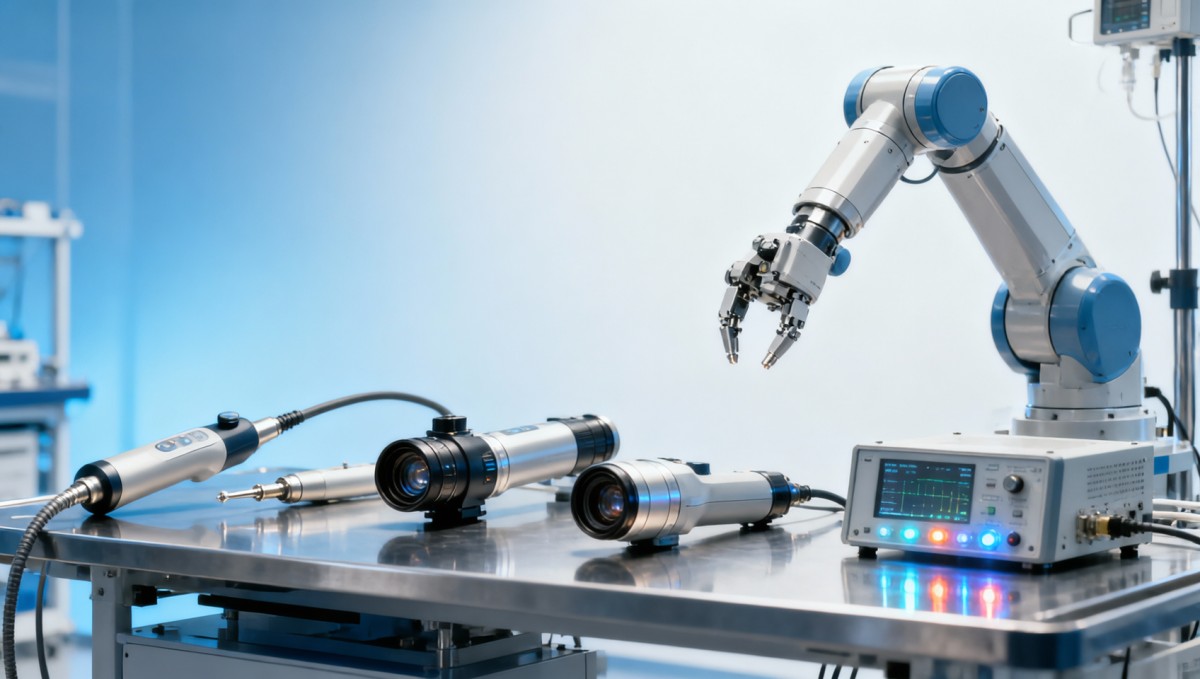

When a medical device assessment overlooks real clinical risk, procurement teams may approve products that are certified on paper but fail under real-world conditions. From ultrasound metrics and surgical robot latency tests to endoscope image resolution benchmarks, healthcare compliance depends on evidence, not claims. This article explores how MDR certification and hospital equipment standards should be examined to protect performance, safety, and long-term value.

For researchers, operators, procurement teams, and executive decision-makers, the problem is rarely a lack of product brochures. The real issue is whether a device can sustain performance after 6 months of daily use, remain stable across temperature and humidity changes, and produce clinically reliable output under actual workflow pressure. In value-based procurement, a device that passes basic documentation review but fails in practice can increase service costs, delay treatment, and create avoidable safety exposure.

This is where independent benchmarking matters. VitalSync Metrics (VSM) focuses on turning engineering parameters into decision-grade evidence, helping buyers compare technical integrity, compliance readiness, and long-term reliability before contracts are signed. In a market where one weak assessment criterion can distort a multimillion-dollar sourcing decision, clinical risk must be tested, not assumed.

Why certification alone does not capture real clinical risk

A medical device may hold required documentation and still underperform in the field. Regulatory pathways such as MDR or IVDR are critical, but they do not replace detailed performance benchmarking under realistic operating conditions. A procurement file can look complete while still missing latency variability, image degradation after repeated use, sensor drift beyond acceptable tolerance, or material fatigue after thousands of cycles.

In many hospitals, device reviews are completed in 2 to 6 weeks, yet the products being evaluated may remain in service for 5 to 10 years. That mismatch creates risk. A short review window often prioritizes certifications, declarations of conformity, and headline specifications, while downplaying stress testing, operator workflow fit, calibration stability, and maintenance burden. The result is a paper-compliant device with uncertain clinical durability.

For example, an imaging system may meet stated resolution requirements in a controlled factory environment, but image quality can decline when ambient temperature shifts by 8°C to 12°C, or when repeated cleaning cycles affect optical components. A wearable sensor may show acceptable sensitivity at launch, yet signal-to-noise ratio can worsen after 30 to 90 days of continuous wear-related strain. These are not marketing issues; they are operational and clinical risks.

Common gaps in standard device assessments

The most frequent weakness in medical device assessment is the assumption that compliance equals readiness. In reality, compliance confirms a threshold, while procurement decisions require deeper evidence about repeatability, tolerance, serviceability, and failure mode behavior. A buying team should separate baseline legal conformity from actual clinical suitability.

- Single-point performance checks instead of repeated-cycle validation over 100, 500, or 1,000 use events.

- No verification of output consistency across multiple operators, shifts, or hospital departments.

- Limited analysis of maintenance intervals, spare part dependency, or calibration drift over 3 to 12 months.

- Insufficient correlation between technical parameters and clinical workflow impact.

These gaps matter because the financial impact is cumulative. A 2% to 4% rise in repeat procedures, service calls, or workflow interruptions may look manageable in isolation, but across dozens of devices and annual procurement cycles, the cost of incomplete assessment can become substantial.

Where VSM-style benchmarking adds decision value

Independent benchmarking translates engineering evidence into procurement clarity. Instead of accepting broad product claims, a benchmarking framework examines signal integrity, wear tolerance, software response consistency, environmental sensitivity, and materials performance under defined conditions. This approach helps teams compare not just what a supplier promises, but what the device repeatedly delivers.

A more reliable assessment should include at least 4 layers: certification review, laboratory performance testing, workflow simulation, and lifecycle risk estimation. If one layer is missing, decision-makers may still not know whether the device supports long-term clinical use without hidden operational compromise.
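As a minimal sketch of how a review team might track the four layers named above, the snippet below lists which layers an assessment file has not yet covered. The function name `missing_layers` and the input format are illustrative assumptions, not part of any VSM tooling.

```python
# The four layer names come from the text; everything else is hypothetical.
REQUIRED_LAYERS = (
    "certification review",
    "laboratory performance testing",
    "workflow simulation",
    "lifecycle risk estimation",
)


def missing_layers(completed):
    """Return the required layers that are absent from `completed`."""
    done = {item.strip().lower() for item in completed}
    return [layer for layer in REQUIRED_LAYERS if layer not in done]


# Hypothetical assessment file that only covers two layers.
gaps = missing_layers(["Certification review", "Workflow simulation"])
print("Layers still missing:", gaps)
```

An empty result would indicate that all four layers are at least represented; it says nothing about the quality of the evidence inside each one.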

What a stronger assessment framework should examine

A high-quality medical device assessment should move beyond a checklist mindset. It should test how the device behaves under stress, how repeatable its output remains, and how likely it is to preserve clinical utility over time. This is especially important for devices used in diagnostic imaging, minimally invasive procedures, monitoring, and high-throughput laboratory settings.

Procurement teams often need a practical framework they can apply across brands and product categories. The table below outlines key assessment dimensions that help reveal real clinical risk before purchase decisions are finalized.

The key takeaway is that risk usually appears in variance, not in the headline number. A product that performs well once but fluctuates later can create more clinical and financial exposure than a device with slightly lower peak performance but tighter consistency. This is why benchmark design should focus on tolerances, repeatability, and operational context.
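The point about variance can be illustrated with a small comparison. The sketch below uses made-up repeated-measurement scores for two hypothetical devices and reports both the peak value and the coefficient of variation (standard deviation divided by mean), one common way to express consistency.

```python
from statistics import mean, pstdev


def coefficient_of_variation(readings):
    """Population standard deviation divided by the mean of the readings."""
    avg = mean(readings)
    if avg == 0:
        raise ValueError("mean of readings is zero; CV is undefined")
    return pstdev(readings) / avg


# Hypothetical scores from five repeated test runs (illustrative only).
device_a = [98, 71, 95, 68, 97]  # higher peak, wide spread
device_b = [90, 89, 91, 90, 90]  # lower peak, tight spread

for name, readings in (("A", device_a), ("B", device_b)):
    cv = coefficient_of_variation(readings)
    print(f"Device {name}: peak={max(readings)}, CV={cv:.3f}")
```

With these invented numbers, device A wins on peak score while device B is far more consistent, which is the pattern a headline-only comparison would hide.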

Checks that are often missed

Operator-dependent variability

A device may appear stable in expert hands but show measurable output variation when used by staff with different training levels. A practical assessment should test at least 3 user profiles or competency levels to identify whether the device is robust enough for real staffing patterns.
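One simple way to quantify this, sketched below under stated assumptions, is to average the readings for each user profile and look at the gap between the best and worst profile. The profile labels, the error metric, and the values are all hypothetical.

```python
from statistics import mean


def between_profile_spread(readings_by_profile):
    """Return (spread, means): the gap between the highest and lowest
    per-profile mean, plus the per-profile means themselves."""
    means = {profile: mean(values) for profile, values in readings_by_profile.items()}
    spread = max(means.values()) - min(means.values())
    return spread, means


# Hypothetical measurement error (arbitrary units) for three user profiles.
trial = {
    "novice": [4.0, 5.0, 6.0],
    "intermediate": [3.0, 3.0, 3.0],
    "expert": [2.0, 2.0, 2.0],
}

spread, means = between_profile_spread(trial)
print("Per-profile means:", means)
print("Spread between profiles:", spread)
```

A large spread relative to the clinical tolerance would suggest the device depends heavily on operator skill; what counts as "large" has to come from the clinical requirement, not from this sketch.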

Degradation after cleaning and disinfection

For endoscopes, probes, and patient-contact systems, repeated cleaning can alter optics, seals, adhesives, or sensor surfaces. Testing after 50, 100, or 250 cleaning cycles gives a far more realistic picture of usable life than new-product inspection alone.
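To show how checkpoint data of this kind might be summarized, the sketch below fits an ordinary least-squares line through scores recorded at 0, 50, 100, and 250 cleaning cycles. The cycle counts follow the text; the scores and the scoring scale are invented for illustration.

```python
def slope_per_cycle(cycles, scores):
    """Least-squares slope of score versus cleaning-cycle count."""
    n = len(cycles)
    mean_x = sum(cycles) / n
    mean_y = sum(scores) / n
    sxx = sum((x - mean_x) ** 2 for x in cycles)
    if sxx == 0:
        raise ValueError("need at least two distinct cycle counts")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(cycles, scores))
    return sxy / sxx


# Hypothetical image-quality scores at each cleaning checkpoint.
checkpoints = [0, 50, 100, 250]
scores = [100.0, 99.0, 98.0, 95.0]

slope = slope_per_cycle(checkpoints, scores)
loss_pct = (scores[0] - scores[-1]) / scores[0] * 100
print(f"Change per cycle: {slope:.4f}")
print(f"Total loss after {checkpoints[-1]} cycles: {loss_pct:.1f}%")
```

A straight-line fit is only a first approximation; real degradation may be stepwise or accelerate late in life, so the raw checkpoint values should always be reviewed alongside the slope.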

Interoperability under hospital IT load

Digital integration failure is an underestimated source of clinical risk. If a device experiences transmission delays, incomplete data mapping, or synchronization errors during peak network usage, compliance on paper does not prevent downstream workflow disruption.

Examples of risk signals in imaging, robotics, and laboratory systems

Different device categories express clinical risk in different ways. In ultrasound systems, risk may appear as inconsistent image sharpness, reduced penetration depth, or unstable Doppler sensitivity during prolonged use. In surgical robotics, the issue may be latency spikes, instrument feedback inconsistency, or motion precision drift over repeated sessions. In laboratory systems, hidden risk often emerges in calibration shift, reagent interaction sensitivity, or throughput bottlenecks.

A meaningful assessment therefore needs category-specific metrics. The table below shows how technical benchmarking can connect performance evidence to clinical and procurement decisions.

This kind of comparison is especially useful when multiple products appear similar at the brochure level. Two systems may both claim high precision, yet one may show a latency range of 80 to 120 ms while another fluctuates from 90 to 240 ms under load. That difference can materially affect operator control, confidence, and patient safety planning.
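The latency comparison above can be expressed as a short summary routine. In this sketch the sample values are hypothetical and were chosen only so that their ranges match the 80 to 120 ms and 90 to 240 ms figures in the text; the 95th percentile uses the nearest-rank method.

```python
import math


def latency_summary(samples_ms):
    """Return min, max, spread, and nearest-rank 95th percentile in ms."""
    ordered = sorted(samples_ms)
    rank = max(1, math.ceil(0.95 * len(ordered)))  # nearest-rank position
    return {
        "min": ordered[0],
        "max": ordered[-1],
        "spread": ordered[-1] - ordered[0],
        "p95": ordered[rank - 1],
    }


# Hypothetical latency samples recorded under load (milliseconds).
system_x = [80, 85, 92, 100, 104, 110, 115, 120]
system_y = [90, 95, 110, 130, 160, 190, 220, 240]

for name, samples in (("X", system_x), ("Y", system_y)):
    print(name, latency_summary(samples))
```

Reporting spread and a high percentile alongside the average makes it harder for occasional latency spikes to disappear behind a respectable mean.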

Warning signs during evaluation

- Only peak-performance results are presented, with no repeated-cycle or long-duration data.

- Test conditions are not disclosed, making it impossible to compare like for like.

- No documentation shows how performance changes after servicing, sterilization, or software updates.

- Downtime estimates are described qualitatively rather than in hours per quarter or service events per year.

For hospital buyers, these warning signs should trigger deeper review. For MedTech startups, they highlight where stronger evidence can accelerate trust. For operators, they explain why a device that looked strong in a short demo can still become difficult in daily use.

How procurement teams can build a risk-aware purchasing process

A risk-aware process starts before final supplier comparison. Instead of waiting until tender close, procurement teams should define a 4-step review path: requirement mapping, evidence screening, benchmark validation, and lifecycle costing. This makes it easier to identify whether a lower purchase price is offset by higher maintenance frequency, unstable performance, or integration complexity.
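The lifecycle costing step can be sketched with a deliberately simple model: purchase price plus yearly service cost plus the cost of downtime, summed over the planning horizon. The formula, parameter names, and all figures below are illustrative assumptions; a real model would likely add consumables, training, and discounting.

```python
def lifecycle_cost(purchase_price, annual_service_cost,
                   downtime_hours_per_year, cost_per_downtime_hour, years):
    """Purchase price plus service and downtime costs over `years`."""
    yearly = annual_service_cost + downtime_hours_per_year * cost_per_downtime_hour
    return purchase_price + years * yearly


# Hypothetical offers compared over a 5-year horizon.
premium = lifecycle_cost(100_000, 5_000, 10, 200, years=5)
budget = lifecycle_cost(80_000, 12_000, 40, 200, years=5)

print("Premium offer, 5-year cost:", premium)
print("Budget offer, 5-year cost:", budget)
```

In this invented scenario the offer with the lower purchase price ends up costing more over five years, which is the trade-off the review path is meant to surface before tender close.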

For many organizations, the best approach is to split evaluation into technical gates. Gate 1 can verify regulatory and documentation readiness. Gate 2 can review clinical-use parameters such as response time, image consistency, measurement tolerance, or cleaning durability. Gate 3 can estimate 3-year to 5-year support burden, including consumables, calibration, software updates, and expected downtime.

A practical checklist for buyers and decision-makers

- Limit the evaluation to roughly 8 to 12 critical parameters that directly affect clinical workflow and safety.

- Request test data over multiple cycles, not just one-time demonstration outputs.

- Ask how performance changes after cleaning, maintenance, software patching, or environmental variation.

- Compare service intervals, spare part lead times, and expected downtime in measurable terms.

- Use an independent benchmark or third-party technical review where product complexity is high.

This process is increasingly important in cross-border sourcing, where documentation standards may be familiar but manufacturing consistency is less visible. A vendor may satisfy headline compliance expectations, yet differences in component quality control, firmware stability, or post-market support can affect long-term deployment success.

What operators should contribute to the assessment

Operators should not be brought in only at the training stage. Their input is essential during evaluation because usability issues often reveal hidden clinical risk. A system that adds 6 extra clicks per procedure, leads to inconsistent probe positioning, or needs a longer recalibration routine may reduce throughput and raise fatigue over time, even if the core technology is compliant.

A good practice is to involve at least 2 to 4 end users in scenario testing. Their role is to identify whether alarms are meaningful, interfaces support fast decisions, and physical design aligns with routine clinical tasks. Small workflow frictions can have outsized effects when repeated across hundreds of procedures each month.

FAQ: key questions about medical device assessment and clinical risk

How do you know if MDR certification is not enough for procurement?

If the assessment package lacks repeated-use data, environmental testing, maintenance impact analysis, or workflow validation, certification alone is not enough. MDR establishes an essential compliance baseline, but procurement decisions should also examine use-case-specific evidence over realistic timeframes such as 3 months, 12 months, or defined cycle counts.

Which metrics matter most when comparing complex medical equipment?

The answer depends on the device category, but high-value metrics often include response latency, measurement tolerance, output consistency, cleaning durability, maintenance interval, and system downtime. For imaging tools, resolution consistency and signal uniformity are often more informative than a single top-line image specification.

How long should an independent benchmark review take?

A focused review can often be completed in 2 to 4 weeks for a single product category if technical files and test samples are available. More complex reviews involving multiple environments, repeated cleaning cycles, interoperability checks, or comparative scoring may require 4 to 8 weeks. Speed matters, but incomplete review is usually more expensive than a short delay.

What is the biggest mistake in hospital equipment standards review?

The most common mistake is treating equipment standards as a paperwork exercise. Real-world assessment should connect standards to operating context: who uses the device, how often, in what environment, with what cleaning frequency, and under what service constraints. Without that context, even technically compliant equipment can carry hidden clinical and financial risk.

When a medical device assessment misses real clinical risk, the cost is rarely limited to procurement error. It can affect patient workflow, maintenance budgets, training requirements, equipment uptime, and long-term confidence in the supply chain. Stronger assessment means validating not just what a device is allowed to claim, but what it can repeatedly deliver in real clinical settings.

VitalSync Metrics (VSM) helps healthcare buyers, MedTech teams, and laboratory planners convert technical complexity into measurable decision criteria. By benchmarking performance, compliance readiness, and lifecycle reliability, VSM supports sourcing decisions built on engineering evidence rather than promotional language. If you need a more defensible way to evaluate medical equipment standards, compare competing platforms, or identify hidden procurement risk, contact us to discuss a tailored benchmarking approach and explore the right solution for your next evaluation cycle.
