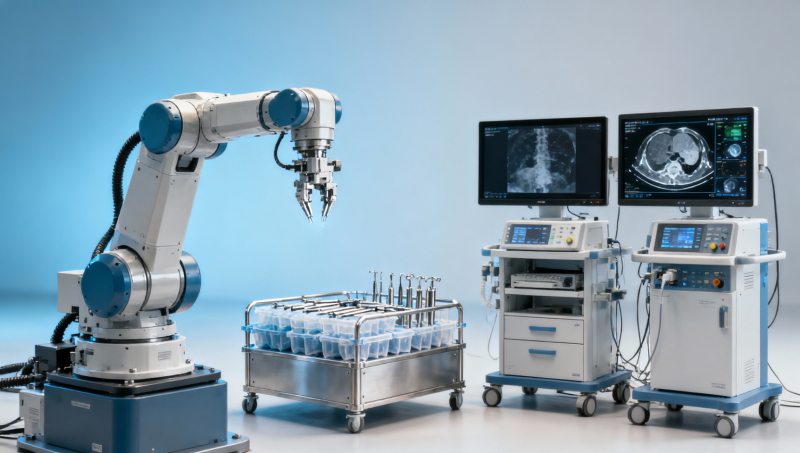

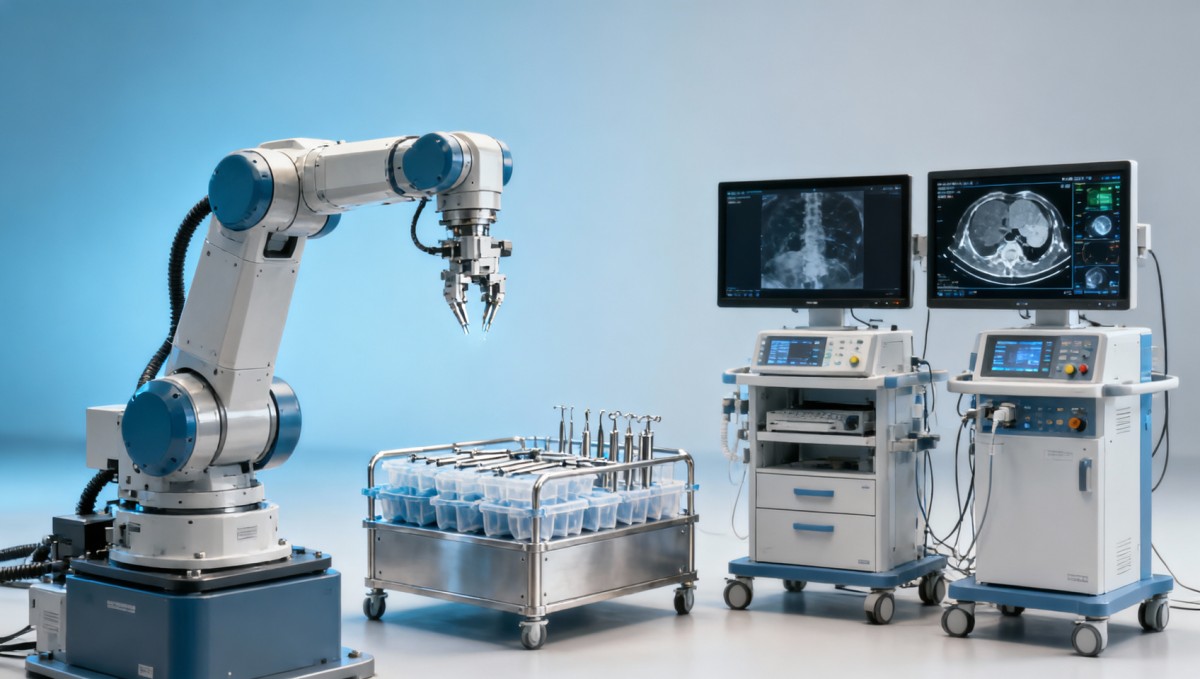

Medical technology evaluation before adopting surgical robots

Before hospitals adopt surgical robots, rigorous medical technology evaluation is essential to separate genuine innovation from risk. For global decision-makers, effective medical device testing, healthcare benchmarking, and medical regulatory compliance under the EU MDR and IVDR are critical to confirming device reliability, certification status, and long-term value. This guide explains how medical technology assessment supports safer procurement, stronger healthcare digital integration, and more confident adoption.

Surgical robotics can improve precision, ergonomics, and workflow standardization, but adoption decisions cannot rest on vendor demonstrations alone. Procurement teams, operators, biomedical engineers, and executive leaders need a repeatable framework that tests technical performance, verifies compliance pathways, and estimates the total impact over 5–10 years of use.

For organizations navigating value-based procurement, the core question is not whether a robot looks innovative. The real question is whether the system performs reliably under clinical stress, integrates with digital infrastructure, and sustains measurable value across training, maintenance, service continuity, and regulatory obligations.

Why medical technology evaluation matters before surgical robot adoption

A surgical robot is not a single device. It is a complex platform that combines electromechanical subsystems, software, imaging interfaces, sterile accessories, service protocols, cybersecurity controls, and operator training requirements. That means medical technology evaluation must cover more than clinical claims. It should assess at least 4 dimensions: technical integrity, workflow fit, compliance readiness, and lifecycle reliability.

Hospitals often face a gap between showroom performance and real operating room conditions. In a controlled demo, setup may take 15–20 minutes. In live practice, turnover delays, staff rotation, sterilization logistics, and room constraints can push the same process to 30–45 minutes. Without structured healthcare benchmarking, procurement teams may underestimate operational friction and overestimate utilization rates.
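To see why the setup gap matters for utilization, the short Python sketch below converts per-case setup time into daily OR capacity. The 480-minute OR day, the 120-minute average procedure, and treating setup as an overhead on every case are illustrative assumptions, not figures from any vendor or study.

```python
# Sketch: how per-case setup time changes daily OR capacity.
# ASSUMPTIONS (not from the article): a 480-minute OR day, a 120-minute
# average procedure, and setup counted as overhead on every case.

def cases_per_day(setup_min: float, procedure_min: float, day_min: float = 480) -> int:
    """Number of whole cases that fit in one OR day."""
    return int(day_min // (setup_min + procedure_min))

PROCEDURE_MIN = 120  # hypothetical average procedure duration in minutes

# Setup times taken from the demo (15-20 min) and live (30-45 min) ranges in the text.
for label, setup in [("demo", 15), ("demo", 20), ("live", 30), ("live", 45)]:
    print(f"{label}, {setup} min setup: {cases_per_day(setup, PROCEDURE_MIN)} cases/day")
```

With these placeholder inputs, setups of 15–30 minutes all fit 3 cases per day, while a 45-minute setup drops capacity to 2. That kind of step change can quietly undermine a utilization forecast built on demo timings.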

Medical device testing is also essential because robotic systems introduce multiple failure points. These may include arm positioning drift, software latency, accessory wear, camera degradation, haptic inconsistency, or incomplete integration with hospital information systems. Even a small deviation such as repeated calibration drift beyond a narrow tolerance window can affect confidence, throughput, and long-term service costs.

For executive teams, evaluation protects capital allocation. A high-cost platform may appear justified based on procedure volume forecasts, yet the business case changes quickly if disposable costs rise by 10%–20%, annual maintenance exceeds expectations, or the system is underused because only 2 or 3 surgeons are fully credentialed. Strong assessment reduces these hidden adoption risks before contract signature.
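A simple per-procedure cost model makes this sensitivity visible. In the sketch below, the annual fixed cost and the per-case disposable cost are hypothetical placeholders. Only the 10%–20% disposable increase comes from the text, and halving case volume stands in for a platform used by fewer credentialed surgeons.

```python
# All monetary figures are hypothetical placeholders, not vendor data.

def cost_per_procedure(annual_fixed: float, disposables_per_case: float,
                       annual_cases: int, disposable_increase: float = 0.0) -> float:
    """Annual fixed cost spread over cases, plus (possibly inflated) disposables."""
    if annual_cases <= 0:
        raise ValueError("annual_cases must be positive")
    return annual_fixed / annual_cases + disposables_per_case * (1 + disposable_increase)

FIXED = 400_000      # assumed annual depreciation plus service contract
DISPOSABLES = 2_000  # assumed disposable cost per case

for cases in (300, 150):            # forecast volume vs. fewer credentialed surgeons
    for rise in (0.0, 0.10, 0.20):  # the 10%-20% range quoted in the text
        c = cost_per_procedure(FIXED, DISPOSABLES, cases, rise)
        print(f"{cases} cases, +{rise:.0%} disposables: {c:,.0f} per case")
```

Under these assumptions, halving volume from 300 to 150 cases raises cost per case from about 3,333 to about 4,667, a larger jump than a 20% disposable increase at the original volume (about 3,333 to 3,733).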

Core evaluation objectives

- Verify whether device performance in repeated testing matches the manufacturer’s stated operating range.

- Confirm whether the platform can support target procedures with realistic staffing and room turnover constraints.

- Assess compliance evidence for MDR/IVDR-related documentation, software traceability, and post-market obligations where relevant.

- Estimate total cost of ownership across 3-year, 5-year, and 7-year planning horizons.
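The first objective can be checked mechanically once repeated measurements are recorded. This sketch assumes a single numeric metric with a manufacturer-stated low/high range; the metric name, the range, and the measurements are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class OperatingRange:
    """Manufacturer-stated range for one metric (values here are hypothetical)."""
    name: str
    low: float
    high: float

def conformance(measurements: list[float], spec: OperatingRange) -> tuple[float, list[int]]:
    """Return the fraction of repeated measurements inside the stated range
    and the zero-based indices of any out-of-range runs."""
    if not measurements:
        raise ValueError("no measurements")
    outside = [i for i, x in enumerate(measurements) if not spec.low <= x <= spec.high]
    return 1 - len(outside) / len(measurements), outside

# Hypothetical example: arm positioning error in mm over 8 repeated runs.
spec = OperatingRange("positioning error (mm)", 0.0, 1.0)
runs = [0.4, 0.5, 0.6, 0.5, 0.9, 1.2, 0.7, 1.1]
rate, bad = conformance(runs, spec)
print(f"{spec.name}: {rate:.0%} in range, out-of-range runs at {bad}")
```

Reporting the indices, not just the rate, matters: out-of-range results clustered late in a series point toward wear or drift rather than random noise.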

Where hospitals commonly misjudge readiness

The most common error is treating surgical robot selection as a capital equipment purchase rather than a system-level transformation project. In practice, readiness depends on infrastructure, sterilization pathways, surgeon learning curves, nursing protocols, data security review, and vendor service response times that should ideally remain within 24–72 hours for critical failures.

A practical evaluation framework: from technical testing to procurement approval

An effective assessment framework should move through structured stages instead of a single committee meeting. Most organizations benefit from a 5-step model that starts with needs definition and ends with monitored rollout. This approach gives procurement teams traceable evidence and helps users compare systems on engineering merit, not marketing language.

The first stage is clinical and operational scoping. Hospitals should define procedure categories, expected annual volume, room configuration constraints, staffing availability, and digital integration requirements. If projected annual use is below a practical threshold, such as 100–150 procedures for a single specialty, the utilization model may require deeper review before investment.
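A screening rule for this threshold might look like the sketch below. Treating 100–150 procedures as a borderline band, rather than picking a single cutoff, is an assumption of this example, as are the specialty forecasts.

```python
def utilization_flag(annual_volume: int, low: int = 100, high: int = 150) -> str:
    """Classify a single-specialty annual forecast against an assumed
    100-150 procedure review band."""
    if annual_volume < low:
        return "deeper review required"
    if annual_volume < high:
        return "borderline: stress-test the forecast"
    return "proceed to technical testing"

forecast = {"urology": 180, "gynecology": 120, "general surgery": 70}  # hypothetical
for specialty, volume in forecast.items():
    print(f"{specialty}: {volume}/yr -> {utilization_flag(volume)}")
```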

The second stage is benchmarked technical testing. This includes repeatability, precision stability, image transmission reliability, instrument change time, system boot duration, alarm management, and cleaning workflow verification. Testing should be repeated over multiple sessions rather than a single day, because intermittent issues often appear only after 20–30 cycles.
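Repeat-session results can be summarized with two simple statistics: the spread within each session, and a comparison of early versus later cycles that exposes issues appearing only after 20 or more cycles. The metric (instrument change time) and all values below are hypothetical, and a real protocol would also define what size of shift counts as significant.

```python
from statistics import mean, stdev

def session_summary(sessions: dict[str, list[float]]) -> dict[str, tuple[float, float]]:
    """Mean and sample standard deviation for each test session (needs >= 2 values)."""
    return {name: (mean(v), stdev(v)) for name, v in sessions.items()}

def late_cycle_shift(values: list[float], early_n: int = 20) -> float:
    """Mean of the cycles after the first `early_n` minus the mean of the first `early_n`.
    A large positive shift points to an issue a short, single-day test would miss."""
    if len(values) <= early_n:
        raise ValueError("need more cycles than early_n")
    return mean(values[early_n:]) - mean(values[:early_n])

# Hypothetical instrument-change times in seconds.
sessions = {"day 1": [40.0, 42.0, 41.0], "day 2": [41.0, 43.0, 45.0]}
print(session_summary(sessions))    # per-session (mean, stdev)
cycles = [40.0] * 20 + [48.0] * 10  # 30 cycles; slowdown appears after cycle 20
print(f"late-cycle shift: {late_cycle_shift(cycles):+.1f} s")
```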

The third and fourth stages cover compliance review and economic assessment. In the compliance stage, the team validates technical files, software change control, service documentation, training obligations, and traceability expectations; in the economic stage, it models acquisition, consumable, maintenance, and staffing costs over the planning horizon. The final stage is a pilot deployment with measurable acceptance criteria, which lets hospitals confirm whether real-world performance matches pre-purchase assumptions.

Recommended 5-step evaluation path

- Define clinical use case, target specialties, and annual utilization forecast.

- Run medical device testing under repeat-use conditions and record technical deviations.

- Review medical regulatory compliance documents, software records, and service commitments.

- Model acquisition, consumables, maintenance, and staffing costs over 3–7 years.

- Launch a limited pilot with operator feedback, workflow metrics, and acceptance thresholds.
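The 5-step path can be expressed as a sequence of gates, where each stage must pass before the next one is considered. The evidence fields and pass rules below are hypothetical placeholders chosen to mirror the list; an actual review committee would define its own.

```python
from typing import Callable

# Each stage pairs a name from the 5-step path with a gate check.
# Evidence keys and pass rules are hypothetical placeholders.
Stage = tuple[str, Callable[[dict], bool]]

STAGES: list[Stage] = [
    ("use case and utilization", lambda e: e["annual_volume"] >= 100),
    ("repeat-use technical testing", lambda e: e["open_deviations"] == 0),
    ("compliance and service review", lambda e: e["missing_documents"] == []),
    ("3-7 year cost model", lambda e: e["tco_within_budget"]),
    ("limited pilot", lambda e: e["pilot_thresholds_met"]),
]

def run_evaluation(evidence: dict) -> tuple[bool, list[str]]:
    """Walk the stages in order and stop at the first failed gate,
    so later stages are never approved on incomplete evidence."""
    passed = []
    for name, gate in STAGES:
        if not gate(evidence):
            return False, passed + [f"FAILED: {name}"]
        passed.append(name)
    return True, passed

evidence = {
    "annual_volume": 160, "open_deviations": 0,
    "missing_documents": ["software rollback policy"],
    "tco_within_budget": True, "pilot_thresholds_met": False,
}
ok, log = run_evaluation(evidence)
print(ok, log)
```

The returned log doubles as a simple audit trail of which gates were cleared before the process stopped.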

The table below shows a useful decision structure for multidisciplinary review teams evaluating surgical robotics before approval.

This structure helps teams compare candidates using measurable checkpoints rather than subjective impressions. It also creates an audit trail that supports board-level approval and future vendor performance reviews.
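One way to make such checkpoints concrete is a weighted scorecard across the four dimensions named earlier: technical integrity, workflow fit, compliance readiness, and lifecycle reliability. The weights, the 1–5 scale, the per-dimension floor, and the candidate scores are hypothetical; the floor reflects the idea that a strong total should not hide a weak area.

```python
# Hypothetical weights; a real review team would agree these before scoring.
WEIGHTS = {
    "technical integrity": 0.30,
    "workflow fit": 0.25,
    "compliance readiness": 0.25,
    "lifecycle reliability": 0.20,
}
FLOOR = 3  # assumed minimum acceptable score (1-5 scale) on any single dimension

def evaluate(scores: dict[str, float]) -> tuple[float, bool]:
    """Weighted score plus a pass flag; any dimension below FLOOR fails the
    candidate regardless of its total, so strengths cannot mask a weak area."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"unscored dimensions: {sorted(missing)}")
    total = sum(w * scores[d] for d, w in WEIGHTS.items())
    return total, all(scores[d] >= FLOOR for d in WEIGHTS)

candidates = {  # hypothetical 1-5 panel scores
    "Robot A": {"technical integrity": 4, "workflow fit": 3,
                "compliance readiness": 4, "lifecycle reliability": 4},
    "Robot B": {"technical integrity": 5, "workflow fit": 5,
                "compliance readiness": 2, "lifecycle reliability": 4},
}
for name, s in candidates.items():
    total, ok = evaluate(s)
    print(f"{name}: {total:.2f} {'pass' if ok else 'fail (below floor)'}")
```

Here Robot B scores higher overall (4.05 vs. 3.75) but fails on compliance readiness, which is exactly the case a single total would hide.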

Key technical and regulatory criteria procurement teams should examine

Procurement decisions should balance engineering evidence with regulatory clarity. A hospital may be satisfied with usability, but if software validation records are incomplete, update control is unclear, or post-market responsibilities are poorly defined, the platform can create long-term compliance exposure. Medical regulatory compliance is not a paperwork exercise; it is part of operational risk control.

From a technical standpoint, teams should review motion stability, instrument endurance, interface reliability, emergency stop behavior, fault recovery logic, and environmental operating conditions. Even where vendors do not disclose every design parameter, buyers can still require objective test records, maintenance schedules, and documented calibration procedures with clearly defined intervals such as every 6 months or after a specified cycle count.
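A "whichever comes first" calibration rule is simple to encode and audit. In this sketch, 182 days approximates the 6-month interval, and the 500-cycle limit is a hypothetical stand-in for a vendor-specified cycle count.

```python
from datetime import date, timedelta

# ASSUMPTIONS: 182 days approximates "every 6 months"; the 500-cycle limit
# is a hypothetical placeholder for a vendor-specified cycle count.
def calibration_due(last_calibrated: date, cycles_since: int, today: date,
                    interval_days: int = 182, max_cycles: int = 500) -> bool:
    """True once either the time interval or the cycle count is reached,
    whichever comes first."""
    elapsed = today - last_calibrated
    return elapsed >= timedelta(days=interval_days) or cycles_since >= max_cycles

last = date(2025, 1, 10)
print(calibration_due(last, 120, date(2025, 3, 1)))   # neither limit reached
print(calibration_due(last, 520, date(2025, 3, 1)))   # cycle limit reached first
print(calibration_due(last, 120, date(2025, 7, 15)))  # 186 days elapsed
```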

Under MDR and related European compliance expectations, hospitals and distributors increasingly need better documentation discipline. That includes device identification, software version control, field safety communication pathways, complaint handling procedures, and evidence that modifications are managed through a controlled process. For digitally integrated systems, cybersecurity governance is equally important, especially when the robot connects to imaging, EMR, or remote service tools.

Clinical device certification and medical device reliability should be interpreted together. Certification may support market access, but it does not replace independent healthcare benchmarking. Procurement leaders should ask how performance changes after repeated use, accessory replacement, and routine maintenance, because lifetime consistency often determines the true value of a robotic system.

Critical document and performance checks

- Preventive maintenance schedule, including service intervals and parts replacement triggers.

- Software update policy with rollback controls, validation evidence, and downtime planning.

- Instrument life tracking, reprocessing instructions, and disposal requirements for consumables.

- Cybersecurity review covering user access, patch process, network segmentation, and audit logs.
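The checklist above can be turned into a gap report by comparing what a vendor has evidenced against the required items. The item names mirror the list; representing them as sets of strings, and the vendor submission itself, are assumptions made for this sketch.

```python
# Required evidence per checklist area, mirroring the list above.
REQUIRED = {
    "preventive maintenance": {"service intervals", "parts replacement triggers"},
    "software updates": {"rollback controls", "validation evidence", "downtime planning"},
    "instrument life": {"life tracking", "reprocessing instructions", "disposal requirements"},
    "cybersecurity": {"user access", "patch process", "network segmentation", "audit logs"},
}

def find_gaps(submitted: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return, per checklist area, the required items the vendor has not evidenced."""
    gaps = {}
    for area, required in REQUIRED.items():
        missing = required - submitted.get(area, set())
        if missing:
            gaps[area] = missing
    return gaps

vendor = {  # hypothetical vendor submission
    "preventive maintenance": {"service intervals", "parts replacement triggers"},
    "software updates": {"validation evidence", "downtime planning"},
    "instrument life": {"life tracking", "reprocessing instructions", "disposal requirements"},
    "cybersecurity": {"user access"},
}
for area, items in find_gaps(vendor).items():
    print(f"{area}: missing {', '.join(sorted(items))}")
```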

The following table can be used as a practical compliance and reliability screening matrix during procurement review.

When this matrix is used early, procurement teams can identify documentation gaps before negotiations are finalized. That improves leverage, strengthens internal governance, and reduces the chance of post-installation disputes about support scope or performance expectations.

Benchmarking real-world performance, workflow integration, and total cost of ownership

Healthcare benchmarking should measure how a surgical robot behaves inside a working hospital environment, not just in vendor-led sessions. Real-world benchmarking typically combines simulated procedures, pilot use, operator scoring, room utilization review, and maintenance analysis. This gives stakeholders a more complete picture of whether the technology can be sustained over time.

Workflow integration is especially important in digitally maturing hospitals. A robot may be technically advanced, yet still slow down operations if image routing, patient data transfer, scheduling synchronization, or reporting workflows remain disconnected. Even a 5–10 minute delay per case becomes significant across 4–6 cases per day and can affect OR planning, staffing, and revenue recovery.
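The cumulative effect is easy to quantify. This sketch multiplies the per-case delay by daily case volume and by an assumed 250 operating days per year, a placeholder rather than a figure from the text.

```python
def lost_minutes(delay_per_case: float, cases_per_day: int, operating_days: int = 250):
    """Return (minutes lost per day, hours lost per year) for a per-case delay.
    The 250 operating days per year is a hypothetical default."""
    daily = delay_per_case * cases_per_day
    return daily, daily * operating_days / 60

for delay in (5, 10):       # minutes of delay per case, from the text
    for cases in (4, 6):    # cases per day, from the text
        daily, yearly_h = lost_minutes(delay, cases)
        print(f"{delay} min x {cases} cases: {daily} min/day, {yearly_h:.0f} h/year")
```

Even the mildest combination (5 minutes across 4 cases) adds up to roughly 83 OR hours per year under this assumption; the worst case reaches 250.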

Total cost of ownership should include at least 6 cost layers: acquisition, installation, disposable instruments, preventive maintenance, corrective service, and training refresh. Some teams also add downtime risk, cybersecurity support, and room adaptation costs. Looking only at purchase price can distort decision quality, particularly when recurring annual costs are substantial.
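A minimal TCO sketch over the 3-, 5-, and 7-year horizons mentioned earlier might separate one-time and recurring layers as below. All amounts are hypothetical, and the model is undiscounted; a finance team would typically apply a discount rate and add the optional layers (downtime risk, cybersecurity support, room adaptation).

```python
# Hypothetical cost inputs; the six layers follow the text. Undiscounted model.
ONE_TIME = {"acquisition": 1_500_000, "installation": 80_000}
ANNUAL = {
    "disposable instruments": 300_000,
    "preventive maintenance": 120_000,
    "corrective service": 40_000,
    "training refresh": 25_000,
}

def tco(years: int, one_time: dict[str, float], annual: dict[str, float]) -> float:
    """Undiscounted total cost of ownership over a planning horizon."""
    return sum(one_time.values()) + years * sum(annual.values())

for horizon in (3, 5, 7):
    total = tco(horizon, ONE_TIME, ANNUAL)
    share = ONE_TIME["acquisition"] / total
    print(f"{horizon}-year TCO: {total:,.0f} (purchase price share {share:.0%})")
```

With these placeholder figures, the purchase price falls from about half of total cost at 3 years to about 30% at 7 years, which is why looking only at the quote distorts the comparison.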

For organizations focused on healthcare digital integration, benchmarking should also cover data interoperability and service diagnostics. Can the platform support secure log extraction, event analysis, and update governance? Can technical teams identify performance drift before it affects patient care? These factors increasingly separate scalable platforms from expensive standalone systems.

Practical benchmarking metrics

- Setup duration across 3–5 consecutive sessions.

- Instrument exchange time under routine staffing conditions.

- Mean downtime per month and escalation response window.

- Operator competency progression after 5, 10, and 20 procedures.

- Data transfer success rate and compatibility with existing digital systems.
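Several of these metrics can be computed directly from pilot logs. The sketch below covers setup duration, monthly downtime, and data transfer success; the log format and values are hypothetical, and escalation windows and competency progression would need their own records.

```python
from statistics import mean

def benchmark_summary(setup_min: list[float], downtime_h_by_month: list[float],
                      transfers_ok: int, transfers_total: int) -> dict[str, float]:
    """Condense raw pilot logs into a few of the metrics listed above."""
    if transfers_total <= 0:
        raise ValueError("transfers_total must be positive")
    return {
        "mean_setup_min": mean(setup_min),
        "setup_spread_min": max(setup_min) - min(setup_min),
        "mean_downtime_h_per_month": mean(downtime_h_by_month),
        "transfer_success_rate": transfers_ok / transfers_total,
    }

# Hypothetical pilot log: 5 consecutive setups, 3 months of downtime, 200 transfers.
summary = benchmark_summary([42, 38, 35, 33, 31], [2.0, 0.5, 1.0], 194, 200)
for metric, value in summary.items():
    print(f"{metric}: {value:.2f}")
```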

A realistic ownership question

If a robot requires frequent consumable replacement, extended setup support, and multiple training rounds for rotating staff, the long-term economics may differ sharply from the original proposal. Benchmarking turns those assumptions into measurable planning inputs and helps leaders evaluate whether projected return depends on optimistic utilization that may never be reached.

Implementation risks, common mistakes, and how to build a safer adoption plan

Even when the technology is promising, poor implementation can undermine outcomes. One common mistake is compressing evaluation, contracting, installation, and training into a short 4–6 week window. For most hospitals, a safer timeline includes staged validation, room preparation, multidisciplinary training, and pilot monitoring over 8–12 weeks or longer depending on procedure complexity.

Another frequent issue is underestimating operator variation. A system that works well for one lead surgeon may still generate inconsistent results across different users. That is why assessment should include surgeons, scrub staff, biomedical engineers, and procurement reviewers. Broader participation uncovers workflow friction that may not appear in a single-user evaluation.

Hospitals should also avoid contracts that lack measurable service obligations. Downtime, software patching, training refresh, and accessory availability should be described clearly. In practical terms, it is useful to define escalation tiers, expected response windows, spare-part access, and acceptance criteria for restoration after a critical fault.
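Once escalation tiers and windows are written into the contract, compliance can be checked against incident records. In this sketch, the tier names, the hour limits, and measuring time from report to restoration are all hypothetical contract terms, not a standard.

```python
from datetime import datetime

# Hypothetical contract terms: maximum hours from fault report to restoration.
RESTORE_WINDOW_H = {"critical": 24, "major": 72, "minor": 120}

def sla_breaches(incidents: list[tuple[str, datetime, datetime]]) -> list[tuple[str, float]]:
    """Return (tier, hours taken) for each incident restored outside its window."""
    breaches = []
    for tier, reported, restored in incidents:
        hours = (restored - reported).total_seconds() / 3600
        if hours > RESTORE_WINDOW_H[tier]:
            breaches.append((tier, hours))
    return breaches

incidents = [  # hypothetical service log
    ("critical", datetime(2025, 3, 3, 8, 0), datetime(2025, 3, 4, 14, 0)),
    ("major", datetime(2025, 3, 10, 9, 0), datetime(2025, 3, 11, 9, 0)),
    ("minor", datetime(2025, 4, 1, 10, 0), datetime(2025, 4, 3, 10, 0)),
]
print(sla_breaches(incidents))
```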

This is where an independent benchmarking and technical review partner can add value. Organizations such as VitalSync Metrics focus on engineering truth rather than promotional positioning. By translating manufacturing parameters, test evidence, and lifecycle behavior into standardized evaluation documents, decision-makers gain a clearer basis for procurement approval and long-term supplier management.

High-priority risk controls before go-live

- Set acceptance criteria for technical performance, not just usability impressions.

- Validate room workflow, sterilization logistics, and digital interfaces before first live case.

- Require documented service and training commitments in the procurement contract.

- Track pilot metrics for at least the first 10–20 procedures to identify drift or bottlenecks.
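A rolling mean over the pilot cases is one simple way to spot drift against an acceptance threshold. The metric (setup minutes), the 35-minute threshold, the 5-case window, and the case data are hypothetical; more formal control-chart methods could replace this check.

```python
from collections import deque
from statistics import mean

def flag_drift(values: list[float], threshold: float, window: int = 5) -> list[int]:
    """Return 1-based case numbers where the rolling mean of the last `window`
    cases exceeds the acceptance threshold."""
    recent = deque(maxlen=window)
    flagged = []
    for case_no, v in enumerate(values, start=1):
        recent.append(v)
        if len(recent) == window and mean(recent) > threshold:
            flagged.append(case_no)
    return flagged

# Hypothetical setup minutes for the first 12 pilot cases.
setup = [34, 33, 32, 33, 31, 32, 33, 35, 37, 39, 41, 42]
print(flag_drift(setup, threshold=35))
```

A single slow case does not trigger the flag; only a sustained rise does, which is closer to the "drift or bottleneck" pattern the pilot is meant to catch.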

FAQ: what decision-makers ask most often

How long should a pre-adoption evaluation take? For a complex robotic system, a realistic initial assessment often spans 4–8 weeks, while full pilot validation may extend to 8–12 weeks depending on specialties, room readiness, and compliance review depth.

What should procurement prioritize first? Start with use-case fit, reliability evidence, and service resilience. If those 3 factors are weak, attractive features or lower quoted pricing rarely compensate for operational risk.

Is certification enough to approve purchase? No. Clinical device certification supports legitimacy, but hospitals still need medical device testing, healthcare benchmarking, and lifecycle review to determine whether the system is a good operational and economic fit.

Who benefits most from independent assessment? Procurement directors, OR managers, MedTech innovators preparing market entry, and executives responsible for value-based purchasing all benefit when technical claims are translated into neutral, comparable evidence.

Medical technology evaluation before adopting surgical robots should be structured, evidence-based, and multidisciplinary. When hospitals combine medical device testing, healthcare benchmarking, compliance review, and lifecycle cost analysis, they gain a more accurate view of medical device reliability, workflow impact, and long-term value.

For teams seeking a clearer path from engineering data to procurement confidence, VitalSync Metrics provides an independent lens on technical integrity, regulatory readiness, and performance benchmarking across the MedTech supply chain. If your organization is comparing surgical platforms, validating supplier claims, or planning a value-based acquisition, now is the right time to get a tailored evaluation framework. Contact us to discuss your requirements, request a customized benchmarking plan, or explore more healthcare technology assessment solutions.
