Where medical equipment standards create hidden redesign costs

Medical equipment standards often look like safeguards, yet they can trigger costly redesigns when healthcare compliance, MDR certification, and real-world medical device assessment are misunderstood early in development. For buyers and builders comparing Physical Therapy Tech, Ultrasound Metrics, or Surgical & Clinical Tech, this article reveals how hidden gaps in medical device certification and hospital equipment standards quietly inflate timelines, sourcing risk, and total lifecycle cost.

For procurement teams, operators, MedTech founders, and healthcare decision-makers, the real cost of a standard is rarely the test fee alone. It appears later in enclosure changes, firmware revisions, material substitutions, retesting cycles, supplier replacement, and delayed market entry. In many projects, a redesign that starts with a “small compliance adjustment” can add 8–16 weeks and affect 3–5 downstream workstreams at once.

This matters even more in a market shaped by MDR, IVDR, value-based procurement, and higher expectations for technical transparency. A device may perform well in a demo, yet still fail to align with hospital equipment standards, usability requirements, electromagnetic compatibility limits, or documentation depth expected during medical device certification review. The result is not just regulatory friction; it is budget leakage across the entire supply chain.

VitalSync Metrics (VSM) focuses on this gap between specification claims and engineering reality. By benchmarking medical technologies against measurable performance and compliance criteria, VSM helps global buyers and builders identify where a standard creates legitimate safety value and where poor interpretation creates preventable redesign cost. Understanding that distinction early is one of the most practical ways to reduce lifecycle risk.

How hidden redesign costs begin before formal certification

Most hidden redesign costs do not start in the test lab. They begin in product definition, when teams treat medical equipment standards as a late-stage approval task rather than a design input. In practice, standards influence electrical architecture, materials, software logs, labeling, cleaning methods, packaging, transport protection, and intended-use wording from day 1.

A common example appears in wearable monitoring, ultrasound accessories, and rehabilitation devices. Early prototypes may be optimized for sensitivity, portability, or user comfort, yet not for leakage current thresholds, ingress protection expectations, sterilization compatibility, or EMC resilience. If these factors are checked only after verification testing, the redesign may affect housings, connectors, shielding layers, battery layout, and even PCB routing.

The cost impact is cumulative. A single failed compliance criterion often triggers four linked expenses: engineering hours, new validation tests, supplier change orders, and launch delay. For a growing MedTech company, losing one procurement cycle in a hospital tender can be more expensive than the redesign itself, especially when purchase committees work on quarterly or semiannual schedules.

Another hidden driver is mismatch between claimed use and actual use. A device positioned as suitable for surgical, clinical, or bedside deployment may face stricter expectations than the product team anticipated. Once the intended environment expands from low-risk outpatient use to mixed-care settings, cleaning chemistry, cable durability, drop tolerance, and alarm behavior often require rework.

For procurement teams, this is why technical due diligence should start before final quotation comparison. Low upfront pricing can conceal high adaptation cost if certification scope, documentation maturity, and real-world performance margins are weak. What appears to be a 7% purchase saving can turn into a 20%–30% lifecycle penalty after retrofits, operator workarounds, and service interventions.
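The arithmetic behind that comparison can be sketched in a few lines. This is only an illustration: the 7% saving and the 20%–30% penalty come from the paragraph above, while the reference price of 100,000 and the choice to express both percentages against that same price are assumptions made for the example.

```python
def net_lifecycle_effect(reference_price, purchase_saving_pct, lifecycle_penalty_pct):
    """Net cost change over the lifecycle, relative to the reference price.

    Assumption: saving and penalty are both percentages of the same
    reference price. A positive result means the cheaper offer ends up
    costing more overall.
    """
    saving = reference_price * purchase_saving_pct / 100
    penalty = reference_price * lifecycle_penalty_pct / 100
    return penalty - saving


REFERENCE_PRICE = 100_000  # hypothetical figure, not from the article

for penalty_pct in (20, 30):
    net = net_lifecycle_effect(REFERENCE_PRICE, 7, penalty_pct)
    print(f"7% saving, {penalty_pct}% lifecycle penalty: net change {net:+,.0f}")
```

Under these assumptions the upfront saving is recovered several times over by later adaptation cost, which is the pattern the paragraph describes.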

Four early assumptions that usually trigger redesign

- Assuming laboratory pass criteria equal hospital readiness, even though hospital equipment standards also involve cleaning workflow, operator behavior, and integration constraints.

- Treating MDR or IVDR documentation as a paperwork layer, when it often exposes missing design traceability, risk controls, and post-market planning.

- Selecting materials based only on cost and availability, without checking fatigue, chemical resistance, or repeated disinfection performance over 500–1,000 cycles.

- Using supplier declarations as final proof, without independent benchmarking of signal quality, tolerance drift, or electrical stability under real operating conditions.

Why “almost compliant” is expensive

In healthcare technology, being close to a threshold is often not commercially safe. A sensor that passes accuracy targets under ideal bench conditions but drifts outside tolerance after transport vibration or thermal cycling is still a redesign risk. So is a cable assembly that performs at 200 mating cycles when procurement teams expect 1,000 cycles over a device’s service life.

This is where independent assessment adds value. Benchmarking performance margins instead of only pass/fail outcomes gives buyers a clearer view of whether a device is robust enough for scaling across multiple sites, operators, and usage intensities. That insight helps prevent standards-related redesign from appearing after installation.
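A minimal sketch of margin-based comparison, as opposed to a plain pass/fail check, might look like the following. The 200 and 1,000 mating-cycle figures come from the cable example above; the function name, the labels, and the 1.5x "robust" factor are hypothetical choices for illustration, not an established threshold.

```python
def classify_margin(measured, required, robust_factor=1.5):
    """Classify a higher-is-better result by its margin over the requirement.

    robust_factor is an illustrative assumption: results at or above
    required * robust_factor are labeled "robust".
    """
    if required <= 0:
        raise ValueError("required must be positive")
    ratio = measured / required
    if ratio < 1:
        return "shortfall"
    if ratio < robust_factor:
        return "thin margin"
    return "robust"


# Cable assembly from the text: 200 demonstrated cycles vs 1,000 expected.
print(classify_margin(200, 1000))
# Two hypothetical competing results that would both "pass" a yes/no check.
print(classify_margin(1050, 1000))
print(classify_margin(1800, 1000))
```

The last two results both clear the requirement, yet only one leaves room for lot variation and wear, which is the distinction a pass/fail report hides.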

Which standards gaps create the highest downstream cost

Not all compliance gaps are equal. Some create minor document revisions, while others force major engineering change. The most expensive gaps usually sit at the intersection of performance, safety, and use environment. These are especially relevant in Physical Therapy Tech, Ultrasound Metrics, and Surgical & Clinical Tech, where devices interact directly with patients, operators, and other equipment.

The table below summarizes common hidden cost sources and why they escalate. It is designed for procurement teams and technical evaluators who need to distinguish between manageable corrective actions and fundamental redesign risk before supplier selection.

The key pattern is that redesign cost rises sharply when the issue affects both hardware and documentation. A material substitution, for example, is not only a sourcing event. It can require biocompatibility review, cleaning validation updates, packaging checks, and revised operator instructions. That means one standards gap can touch 5 or more approval artifacts.

The same logic applies to software-enabled equipment. If device behavior in alarms, user access, or data logging does not match intended clinical workflow, the correction is not just a firmware patch. It may also require revised risk analysis, fresh usability evidence, and integration retesting. For hospital buyers, this is why pre-award technical verification matters as much as price comparison.

High-risk areas by application segment

Physical Therapy Tech often faces hidden redesign pressure around mechanical fatigue, surface cleaning tolerance, and repetitive-use durability. Devices used 20–40 times per day can expose component wear faster than a bench qualification suggests. Procurement teams should request cycle-life assumptions, maintenance intervals, and replacement part strategy during evaluation.
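To see why usage intensity matters when reviewing cycle-life assumptions, a rough estimate can be computed as below. The 20–40 uses per day come from the paragraph above; the rated cycle count of 10,000 is a hypothetical value, and the calculation assumes one cycle per use and use on every calendar day.

```python
def days_to_rated_life(rated_cycles, uses_per_day):
    """Days until a component reaches its rated cycle count.

    Assumptions: one cycle per use, and the device is used every day.
    """
    if uses_per_day <= 0:
        raise ValueError("uses_per_day must be positive")
    return rated_cycles / uses_per_day


RATED_CYCLES = 10_000  # hypothetical supplier rating, not from the article

for uses in (20, 40):
    days = days_to_rated_life(RATED_CYCLES, uses)
    print(f"{uses} uses/day: rated life reached after about {days:.0f} days")
```

With these illustrative numbers, doubling daily use halves the time to the rated limit, so a maintenance interval quoted for light use may not hold in a busy department.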

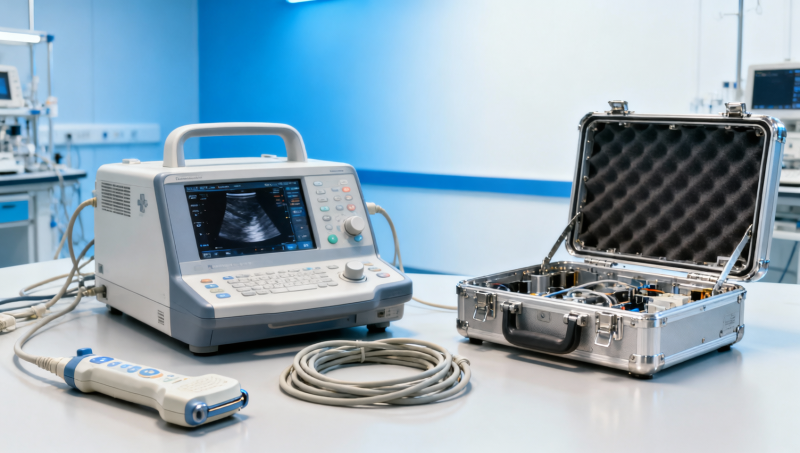

Ultrasound-related systems frequently face risk in transducer handling, signal stability, cable strain relief, and image consistency across usage temperatures. A small decline in signal-to-noise ratio may not stop product launch, but it can change diagnostic confidence and trigger rework in shielding or front-end electronics.

Surgical & Clinical Tech usually carries tighter scrutiny on cleaning validation, accessory compatibility, electrical safety in mixed-equipment rooms, and workflow reliability. Here, hidden redesign often comes from the use environment, not just the device itself.

How buyers and builders should evaluate compliance before committing

The most effective way to avoid hidden redesign cost is to evaluate compliance as a layered engineering question. Buyers should not ask only, “Is the device certified?” They should ask whether the device, evidence package, and operating assumptions remain stable when deployed across actual hospital workflows, cleaning routines, and maintenance cycles.

For MedTech developers, that means building a review framework before design freeze. For procurement teams, it means using structured technical checkpoints before final award. In both cases, the objective is the same: detect weak margins while changes are still affordable, ideally before tooling, packaging finalization, or multi-site rollout planning.

The matrix below can be used during sourcing, technical due diligence, or internal design review. It focuses on practical indicators that reduce the chance of post-selection redesign.

A useful rule is to score each dimension on a 1–5 scale and flag anything below 3 for deeper review. If two or more dimensions score low, the supplier may still be viable, but only with a controlled implementation plan and documented mitigation steps. This is particularly important for cross-border sourcing, where quality records and change control practices may vary significantly.
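The scoring rule above can be expressed as a short check. This is a sketch only: the dimension names and scores are hypothetical placeholders, and "two or more dimensions score low" is interpreted here as two or more scores below 3.

```python
def review_scores(scores, flag_below=3, plan_if_flagged=2):
    """Apply the 1-5 scoring rule to one supplier.

    Returns (flagged_dimensions, needs_controlled_plan).
    """
    for name, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{name}: score {score} is outside the 1-5 scale")
    flagged = sorted(name for name, score in scores.items() if score < flag_below)
    return flagged, len(flagged) >= plan_if_flagged


# Hypothetical dimension names and scores, for illustration only.
supplier_a = {
    "intended_use_clarity": 4,
    "performance_margin": 2,
    "documentation_maturity": 3,
    "cleaning_compatibility": 2,
    "service_support": 5,
}

flagged, needs_plan = review_scores(supplier_a)
print("Deeper review:", flagged)
print("Controlled implementation plan required:", needs_plan)
```

Keeping the thresholds as parameters lets a team tighten the rule for higher-risk categories without changing how suppliers are scored.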

A five-step review process

- Confirm intended use, user profile, and care setting in exact wording, not marketing language.

- Review evidence for performance margin under normal, stressed, and repeated-use conditions.

- Check whether MDR or IVDR documentation is design-linked, current, and supplier-supported.

- Validate cleaning, maintenance, and service assumptions with operators and biomedical teams.

- Benchmark competing options using the same metrics, not different vendor-selected test narratives.

Where VSM adds practical value

VSM’s role in this process is to convert technical claims into comparable evidence. Independent benchmarking can identify whether a device’s apparent compliance is supported by stable engineering margins or weakened by optimistic assumptions. For procurement leaders, that improves negotiating position. For developers, it reduces the chance of discovering a costly mismatch after capital has already been committed.

In sectors where 2–3 shortlisted vendors appear similar on paper, the differentiator is often not the nominal standard reference but how well the product performs near thresholds and under repeated operational stress. That is precisely where redesign cost tends to hide.

Implementation, service planning, and common misconceptions

Even when a product clears certification review, hidden redesign cost can still emerge during implementation. Hospitals, laboratories, and multi-site care groups introduce variables that are difficult to simulate perfectly in development: storage humidity, cable handling habits, operator turnover, mixed disinfection chemicals, and intermittent power quality. A device that passed formal assessment may still require configuration, accessory, or workflow adjustment in its first 30–90 days of use.

This is why service planning should be treated as part of compliance strategy. If maintenance intervals are undefined, spare parts are single-source, or calibration requires long downtime, the device may satisfy certification but still fail procurement expectations for reliability and continuity. In value-based purchasing environments, these operational weaknesses matter as much as acquisition cost.

One recurring misconception is that redesign only concerns manufacturers. In reality, buyers also absorb redesign cost when an installed device needs local adaptation, revised SOPs, operator retraining, or replacement consumables. A procurement team may sign one contract, yet inherit 3 additional internal tasks involving biomedical engineering, infection control, and clinical education.

Another misconception is that “certified” means “universally suitable.” Certification confirms conformity within a defined scope, not perfect fit for every department, workflow, or environmental condition. The closer a purchase decision is tied to specific use scenarios, the lower the risk of costly post-award adjustment.

Common procurement and deployment mistakes

- Comparing vendors only on unit price and certificate presence, without checking maintenance hours per quarter or expected consumable burden.

- Ignoring cleaning chemistry compatibility, which can shorten enclosure life or degrade sensor surfaces within 6–12 months.

- Assuming one test sample represents production consistency, despite differences in supplier lots, assembly control, or firmware versioning.

- Rolling out to multiple sites before confirming workflow fit in one pilot department or one reference lab environment.

FAQ for procurement teams and technical evaluators

How long can a standards-related redesign delay a medical device project?

Minor documentation corrections may take 2–4 weeks. Hardware, material, or EMC-related redesign can easily extend a project by 8–16 weeks, especially if retesting and supplier qualification are required. If packaging, labeling, and risk files must also be updated, the timeline may stretch further.

Which buyers are most exposed to hidden redesign cost?

Hospital procurement directors, laboratory architects, and buyers managing cross-border sourcing face the highest exposure. They often compare multiple technologies and rely on supplier documentation that may not reflect actual use conditions. Multi-site deployments amplify this risk because small compliance weaknesses become larger operational issues at scale.

What is the most important question to ask before supplier selection?

Ask for evidence of performance margin under real-world use conditions, not only certificate status. A supplier should be able to explain how the device behaves under repeated cleaning, transportation stress, operator variation, and service intervals. If the answer is vague, redesign risk is likely still unresolved.

Can independent benchmarking reduce procurement risk?

Yes. Independent benchmarking helps buyers compare products using the same criteria and reveals where compliance claims are strong, narrow, or unsupported by operating margin. This is especially useful when shortlist options differ only slightly in price but significantly in long-term reliability and documentation maturity.

Medical equipment standards are essential, but they only protect budgets and patients when they are translated correctly into design, sourcing, validation, and service planning. Hidden redesign costs usually come from gaps between claimed compliance and actual operating reality, especially in areas such as EMC resilience, cleaning compatibility, usability, documentation maturity, and lifecycle support.

For organizations evaluating Physical Therapy Tech, Ultrasound Metrics, or Surgical & Clinical Tech, the smartest move is to examine performance margin and deployment fit before contracts are finalized. VitalSync Metrics (VSM) supports that process by turning technical complexity into measurable comparison, helping teams reduce sourcing uncertainty and make evidence-based decisions with greater confidence. To explore a tailored benchmarking approach, get in touch with VSM, request a custom evaluation framework, or learn more about solution options aligned with your compliance and procurement goals.
