Surgical Robot Latency Test Benchmarks: What to Compare Before Approval

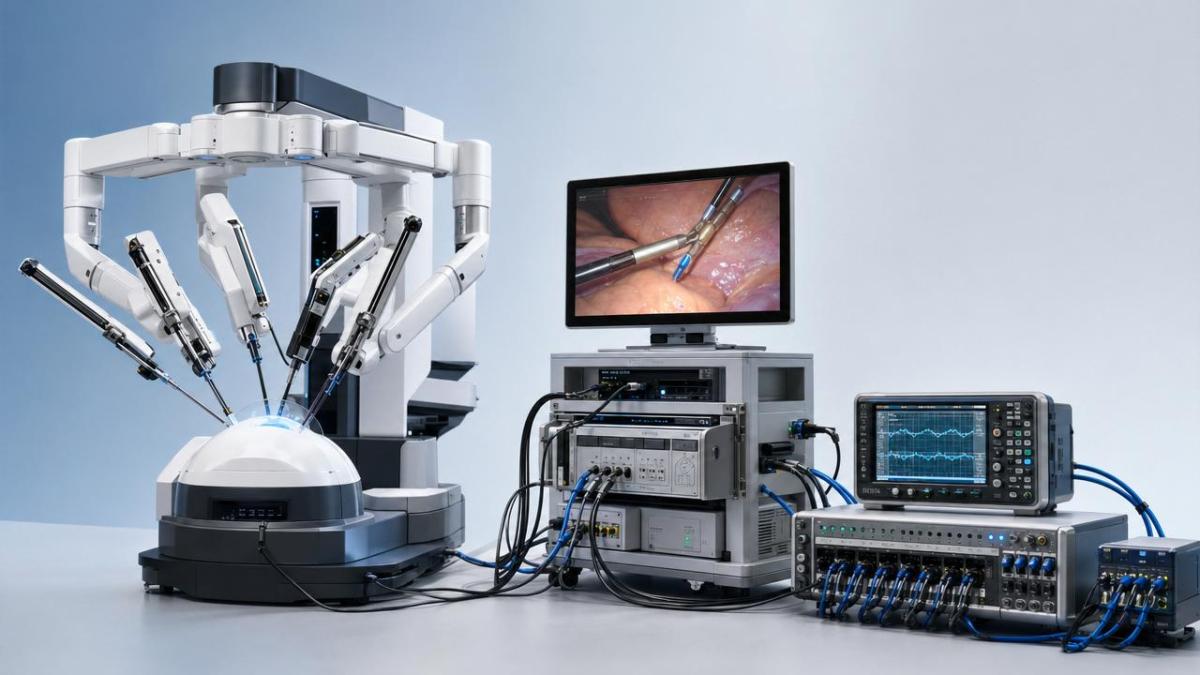

Before a surgical system earns regulatory confidence, buyers and reviewers must look beyond vendor claims and examine measurable performance. A rigorous surgical robot latency test can reveal whether control response, video delay, and network stability meet real clinical demands. For procurement leaders and MedTech teams, comparing the right latency benchmarks before approval is essential to reducing risk, strengthening compliance, and validating long-term operational reliability.

Why latency benchmarking has become a stronger approval signal

The market context around robotic surgery is changing quickly. Hospitals are under pressure to justify capital expenditure through measurable outcomes, while regulators increasingly expect objective performance evidence rather than broad marketing language. In that environment, a surgical robot latency test is no longer a technical side note. It has become a practical decision tool for approval teams, procurement committees, and engineering reviewers trying to separate mature platforms from systems that still carry hidden operational risk.

Several shifts explain this change. First, surgical robotics now depends on more interconnected subsystems than before: motion control, imaging, middleware, foot pedals, haptic interfaces, networking, and data logging. Second, the move toward digital operating rooms means latency can no longer be assessed as a single number. Third, value-based procurement has raised the bar for proof. Buyers want evidence that response timing remains stable not only in ideal demos, but also under realistic loads, software updates, and repeated use.

For enterprise decision-makers, this means pre-approval review is moving from “Does the robot work?” to “How consistently does it respond under expected clinical conditions?” That change matters because latency is tied to surgeon confidence, precision, workflow continuity, and incident prevention. A slow or unstable response profile may not appear dramatic in a sales presentation, but it can become highly relevant during delicate manipulation, camera repositioning, or instrument exchange.

The trend is not lower latency alone, but more comparable latency evidence

An important industry signal is that stakeholders are asking better comparison questions. Instead of accepting a vendor statement such as “ultra-low delay,” technical reviewers are asking how that result was measured, under what conditions, and which path the delay includes. A high-quality surgical robot latency test should define whether it measures controller-to-actuator delay, end-to-end visual delay, network transmission delay, or complete surgeon-input-to-instrument-motion delay.

This is where many approval discussions become sharper. Two systems may both claim acceptable latency, yet one may exclude video processing or ignore jitter under network stress. Another may report only average delay and hide worst-case spikes. For procurement teams, these differences are not academic. They affect usability, training burden, maintenance planning, and ultimately the confidence of clinical leadership.

The practical implication is clear: the best surgical robot latency test is not simply the one that reports the lowest number, but the one with the clearest scope, the most repeatable method, and the greatest decision value.

Which latency benchmarks deserve direct comparison

Before approval, buyers should compare latency across several dimensions rather than relying on a single benchmark headline. The most useful categories are those that connect directly to clinical performance and operational reliability.

1. End-to-end control response

This measures the elapsed time from surgeon input to robotic movement. It is often the anchor metric in a surgical robot latency test because it reflects the direct feel of the system. Ask whether the reported value includes filtering, command processing, and actuator response. Also check whether the result is measured under single-task conditions only or under simultaneous camera and instrument activity.
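As a minimal sketch of how the single-task versus simultaneous-activity comparison might be computed, assume each bench trial logs one timestamp when the surgeon-side input is registered and another when instrument motion is first detected, plus a flag for whether the camera was moving at the time. The `Trial` fields, the `median_latency_ms` helper, and the sample values are hypothetical and do not reflect any vendor's logging format.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Trial:
    """One bench trial (hypothetical log format, for illustration only)."""
    input_ts_ms: float    # when the surgeon-side input was registered
    motion_ts_ms: float   # when instrument motion was first detected
    camera_active: bool   # True if the camera was moving during the trial


def median_latency_ms(trials, camera_active):
    """Median input-to-motion delay for one test condition."""
    delays = [
        t.motion_ts_ms - t.input_ts_ms
        for t in trials
        if t.camera_active == camera_active
    ]
    if not delays:
        raise ValueError("no trials recorded for this condition")
    return median(delays)


# Illustrative values only; real trials would come from instrumented bench logs.
trials = [
    Trial(0.0, 58.0, False),
    Trial(1000.0, 1061.0, False),
    Trial(2000.0, 2060.0, False),
    Trial(3000.0, 3074.0, True),
    Trial(4000.0, 4081.0, True),
    Trial(5000.0, 5077.0, True),
]

single_task = median_latency_ms(trials, camera_active=False)
concurrent = median_latency_ms(trials, camera_active=True)
print(f"single-task median: {single_task:.1f} ms")
print(f"concurrent median:  {concurrent:.1f} ms")
print(f"penalty under concurrent activity: {concurrent - single_task:.1f} ms")
```

Reporting the two conditions side by side makes it harder for a single-task figure to stand in for the whole system.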

2. Video and visualization delay

A robot can have acceptable mechanical response yet still feel unsafe if the visual feedback path lags. Imaging delay matters even more as systems move toward higher resolution, digital overlays, and AI-assisted display functions. Approval teams should compare raw camera latency, encoded video delay, display processing delay, and full image loop timing.
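One way to compare these figures is to treat the per-stage delays as a budget and check how much of the measured full image loop they explain. The sketch below assumes the stages are measured separately and the full loop is measured independently. The stage names, the `unaccounted_delay_ms` helper, and all values are hypothetical placeholders.

```python
# Hypothetical per-stage measurements in milliseconds (illustrative values only).
stage_delays_ms = {
    "camera_capture": 12.0,
    "video_encode": 9.0,
    "display_processing": 14.0,
}

# Hypothetical independent measurement of the full image loop.
measured_full_loop_ms = 48.0


def unaccounted_delay_ms(stages, full_loop_ms):
    """Delay in the full loop that the itemized stages do not explain."""
    return full_loop_ms - sum(stages.values())


gap_ms = unaccounted_delay_ms(stage_delays_ms, measured_full_loop_ms)
print(f"itemized stages: {sum(stage_delays_ms.values()):.1f} ms")
print(f"full image loop: {measured_full_loop_ms:.1f} ms")
print(f"unaccounted:     {gap_ms:.1f} ms")
```

A large unaccounted remainder suggests that the vendor's itemized figures leave out part of the visual path, which is worth raising during review.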

3. Jitter and worst-case spikes

Average latency alone can be misleading. A stable 60 ms may be easier to manage than an unstable range that swings from 30 ms to 120 ms. A surgical robot latency test should therefore report consistency metrics such as standard deviation, percentile values (for example, the 95th and 99th percentiles), and the frequency and size of outlier events. This is especially important for systems that depend on multiple software layers or hospital network traffic.
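The point about averages can be illustrated with a short sketch. It assumes latency samples are available as a plain list of milliseconds and uses the nearest-rank percentile method. The two traces and the 100 ms spike threshold are illustrative assumptions, not clinical limits.

```python
import math
from statistics import mean, pstdev


def percentile(values, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(latencies_ms, spike_threshold_ms):
    """Distribution metrics that an average alone would hide."""
    return {
        "mean": mean(latencies_ms),
        "p50": percentile(latencies_ms, 50),
        "p95": percentile(latencies_ms, 95),
        "p99": percentile(latencies_ms, 99),
        "max": max(latencies_ms),
        "jitter_sd": pstdev(latencies_ms),  # population standard deviation
        "spikes": sum(1 for v in latencies_ms if v > spike_threshold_ms),
    }


# Illustrative traces: one steady at 60 ms, one mostly 30 ms with 120 ms spikes.
stable = [60.0] * 10
unstable = [30.0] * 8 + [120.0] * 2

stable_summary = summarize(stable, spike_threshold_ms=100.0)
unstable_summary = summarize(unstable, spike_threshold_ms=100.0)

for name, summary in (("stable", stable_summary), ("unstable", unstable_summary)):
    print(name, summary)
```

In this made-up data the unstable trace has the lower mean, yet its 95th percentile and spike count are clearly worse, which is the pattern a mean-only report would conceal.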

4. Recovery after load or disruption

Another benchmark gaining attention is resilience: how quickly the system returns to normal timing after stress. This may include temporary bandwidth congestion, module restarts, logging bursts, or data synchronization events. For long procedures, this recovery behavior can be more meaningful than ideal-lab latency.
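Recovery behavior can be turned into a number in several ways. The sketch below uses one possible definition: the time from the end of a disruption until latency first returns to an acceptance band and stays there for a set number of consecutive samples. The `recovery_time_ms` function, the band, the hold count, and the trace are all hypothetical.

```python
def recovery_time_ms(samples, disruption_end_ms, band_ms, hold=3):
    """Time from the end of a disruption until latency settles.

    samples: list of (timestamp_ms, latency_ms) pairs sorted by timestamp.
    "Settled" here means `hold` consecutive samples at or below `band_ms`.
    Returns None if the trace never settles.
    """
    run_start = None
    run_len = 0
    for ts, latency in samples:
        if ts < disruption_end_ms:
            continue  # ignore samples taken before the disruption ended
        if latency <= band_ms:
            if run_len == 0:
                run_start = ts  # first in-band sample of a candidate run
            run_len += 1
            if run_len >= hold:
                return run_start - disruption_end_ms
        else:
            run_len = 0  # spike breaks the run; start over
    return None


# Illustrative trace: congestion ends at t = 300 ms, latency settles from t = 500 ms.
trace = [
    (0, 60.0), (100, 60.0), (200, 150.0), (300, 140.0),
    (400, 90.0), (500, 65.0), (600, 62.0), (700, 61.0),
]

recovery = recovery_time_ms(trace, disruption_end_ms=300, band_ms=70.0, hold=3)
print(f"recovery time: {recovery} ms")
```

Requiring several consecutive in-band samples avoids counting a single lucky reading as recovery. Whatever definition is chosen should be stated alongside the result so that suppliers can be compared on the same basis.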

5. Performance over time

Long-term stability is increasingly part of approval logic. Reviewers should ask whether latency changes after thermal buildup, repeated sterilization and handling cycles, software patches, or aging of sensors and cables. Independent benchmark laboratories such as VitalSync Metrics (VSM) can add value here by translating engineering parameters into comparable evidence that procurement teams can use with confidence.
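A simple way to express drift is the slope of a least-squares line fitted to latency measured at intervals during extended operation. The sketch below assumes one latency reading per checkpoint. The `drift_ms_per_hour` function and the data are illustrative, and a real study would also need to separate drift from measurement noise.

```python
def drift_ms_per_hour(hours, latencies_ms):
    """Least-squares slope of latency against operating time."""
    n = len(hours)
    if n != len(latencies_ms) or n < 2:
        raise ValueError("need at least two paired measurements")
    mean_h = sum(hours) / n
    mean_l = sum(latencies_ms) / n
    numerator = sum((h - mean_h) * (l - mean_l) for h, l in zip(hours, latencies_ms))
    denominator = sum((h - mean_h) ** 2 for h in hours)
    if denominator == 0:
        raise ValueError("all measurements share the same timestamp")
    return numerator / denominator


# Illustrative readings taken every two hours of continuous operation.
hours = [0.0, 2.0, 4.0, 6.0, 8.0]
latencies_ms = [60.0, 60.5, 61.0, 61.5, 62.0]

slope = drift_ms_per_hour(hours, latencies_ms)
print(f"estimated drift: {slope:.2f} ms per hour")
```

The same calculation can be repeated before and after a software patch or hardware service event to check whether the trend has changed.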

What is driving tighter scrutiny of the surgical robot latency test

The stronger focus on latency is not happening in isolation. It is driven by regulatory expectations, digital architecture complexity, and buyer maturity.

- Regulatory review increasingly favors traceable technical evidence, especially when software and connected functions influence performance.

- Integrated operating room environments introduce more interfaces, which increases the chance of hidden delay paths.

- Hospital procurement teams are under financial pressure to validate lifecycle value, not only headline innovation.

- Clinical stakeholders expect predictable behavior that supports training, reproducibility, and workflow confidence.

- MedTech investors and partners increasingly ask whether benchmark claims can survive external validation.

Taken together, these drivers are changing purchasing conversations. The most competitive suppliers are now those that can explain not only how their system performs, but how that performance was verified, normalized, and documented across realistic use cases.

Who feels the impact most from changing latency expectations

Not every stakeholder experiences these changes in the same way. The impact of a surgical robot latency test differs across the approval chain, and strong organizations recognize this early.

This widening impact explains why latency benchmarking is now part of broader strategic planning. It influences vendor selection, documentation design, software release management, and even commercialization timing.

Signals that a benchmark package is strong enough for serious review

For decision-makers, the challenge is not merely asking for more data, but asking for data that improves judgment. A credible surgical robot latency test package usually shows several strengths.

- Clear definition of each latency path and what is included or excluded.

- Repeatable bench setup with documented instrumentation and environmental conditions.

- Results across normal, peak, and fault-adjacent operating scenarios.

- Distribution metrics such as variance, percentiles, and spike frequency.

- Evidence of software version control and change impact assessment.

- Independent review or third-party benchmarking where feasible.
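The checklist above can be applied to every submission in the same way with a small completeness check. This is only a sketch: the key names are hypothetical labels for the listed items, and independent review is treated as optional because the list qualifies it with "where feasible".

```python
# Hypothetical labels for the evidence items listed above.
REQUIRED_EVIDENCE = (
    "latency_path_definitions",
    "bench_setup_documentation",
    "scenario_coverage",          # normal, peak, and fault-adjacent scenarios
    "distribution_metrics",       # variance, percentiles, spike frequency
    "software_version_control",
)
OPTIONAL_EVIDENCE = ("independent_review",)


def review_package(submitted):
    """Return which required and optional evidence items are missing.

    submitted: dict mapping an evidence label to True when it is present.
    """
    return {
        "missing_required": [k for k in REQUIRED_EVIDENCE if not submitted.get(k)],
        "missing_optional": [k for k in OPTIONAL_EVIDENCE if not submitted.get(k)],
    }


# Illustrative submission from a hypothetical supplier.
package = {
    "latency_path_definitions": True,
    "bench_setup_documentation": True,
    "scenario_coverage": False,
    "distribution_metrics": True,
}

result = review_package(package)
print("missing required:", result["missing_required"])
print("missing optional:", result["missing_optional"])
```

A check like this only confirms that each item is present. Judging the quality of the evidence still requires technical review.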

Weak packages, by contrast, often rely on isolated screenshots, undefined averages, or selective demo conditions. In an approval context, that kind of evidence creates ambiguity, and ambiguity usually translates into delay, internal resistance, or reduced buyer trust.

How companies should respond before the market raises the bar again

The next phase of competition will likely center on benchmark discipline as much as on robotic functionality. Companies that wait until the final approval stage to prepare a surgical robot latency test may find that fixing documentation gaps is harder than improving hardware. A better approach is to treat latency evidence as a development asset from the start.

For manufacturers, that means mapping latency-critical pathways early, setting internal acceptance bands, and stress-testing integrated workflows before external review. For procurement organizations, it means defining a comparison framework in advance so all suppliers answer the same questions. For laboratory architects and benchmarking partners, it means building methods that link engineering measurements to operational decisions rather than producing isolated technical outputs.
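Acceptance bands and a shared comparison framework could be sketched as follows. The metric names, supplier names, and threshold values are placeholders invented for illustration. They are not clinical guidance, and real limits would have to come from the organization's own risk analysis.

```python
# Placeholder acceptance bands in milliseconds (hypothetical, not clinical limits).
ACCEPTANCE_BANDS_MS = {
    "control_p95": 100.0,
    "video_p95": 120.0,
    "worst_case": 200.0,
}


def evaluate(results_ms):
    """Check one supplier's reported metrics against every band.

    A metric the supplier did not report counts as a failure, so that
    missing data cannot pass silently.
    """
    outcome = {}
    for metric, limit in ACCEPTANCE_BANDS_MS.items():
        value = results_ms.get(metric)
        outcome[metric] = value is not None and value <= limit
    return outcome


# Illustrative submissions from two hypothetical suppliers.
submissions = {
    "Supplier A": {"control_p95": 82.0, "video_p95": 110.0, "worst_case": 160.0},
    "Supplier B": {"control_p95": 70.0, "video_p95": 135.0},
}

report = {name: evaluate(metrics) for name, metrics in submissions.items()}
for name, outcome in report.items():
    status = "meets all bands" if all(outcome.values()) else "needs follow-up"
    print(f"{name}: {status} {outcome}")
```

Because every supplier is scored against the same bands and the same metric definitions, a gap in the data shows up as a question to ask rather than as a favorable omission.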

Practical questions to ask before approval decisions move forward

If your organization is reviewing a surgical platform, the most valuable next step is often a better question set. Consider confirming the following points before approval or shortlist selection:

- Which latency metric best reflects the real clinical workflow your team will depend on?

- Does the surgical robot latency test include video, control, network, and software layers in a clearly defined way?

- How does the system behave under stress, not just in nominal conditions?

- Are worst-case events documented, and what is their operational significance?

- Can the benchmark method be repeated by an independent technical party?

- What happens to latency after software updates, integration changes, or prolonged use?

In a market moving toward technical transparency and procurement accountability, these questions are no longer optional. They help organizations judge readiness, compare suppliers with greater confidence, and align approval decisions with real-world reliability. For teams assessing a specific device pipeline or purchasing program, the priority is to verify which latency claims are measurable, comparable, and durable over time.
