Surgical Robot Latency Test: What Delay Is Still Acceptable in Practice

In a surgical robot latency test, the central question is not whether delay exists, but how much delay remains clinically acceptable under real operating conditions. For technical evaluators, this means moving beyond vendor claims to examine response stability, control accuracy, and risk thresholds through measurable benchmarks. Understanding acceptable latency in practice is essential for judging system safety, usability, and procurement readiness.

Why latency testing is becoming a strategic evaluation issue

The discussion around the surgical robot latency test has shifted. A few years ago, latency was often treated as a narrow engineering variable, relevant mainly to developers and control system specialists. Today, it has become a cross-functional procurement question. Hospitals are investing in more digitally integrated operating rooms, robotic platforms are handling more delicate procedures, and regulators increasingly expect evidence that performance claims are supported by repeatable technical validation. In that environment, “low latency” is no longer just an attractive marketing phrase. It is a measurable safety and usability indicator.

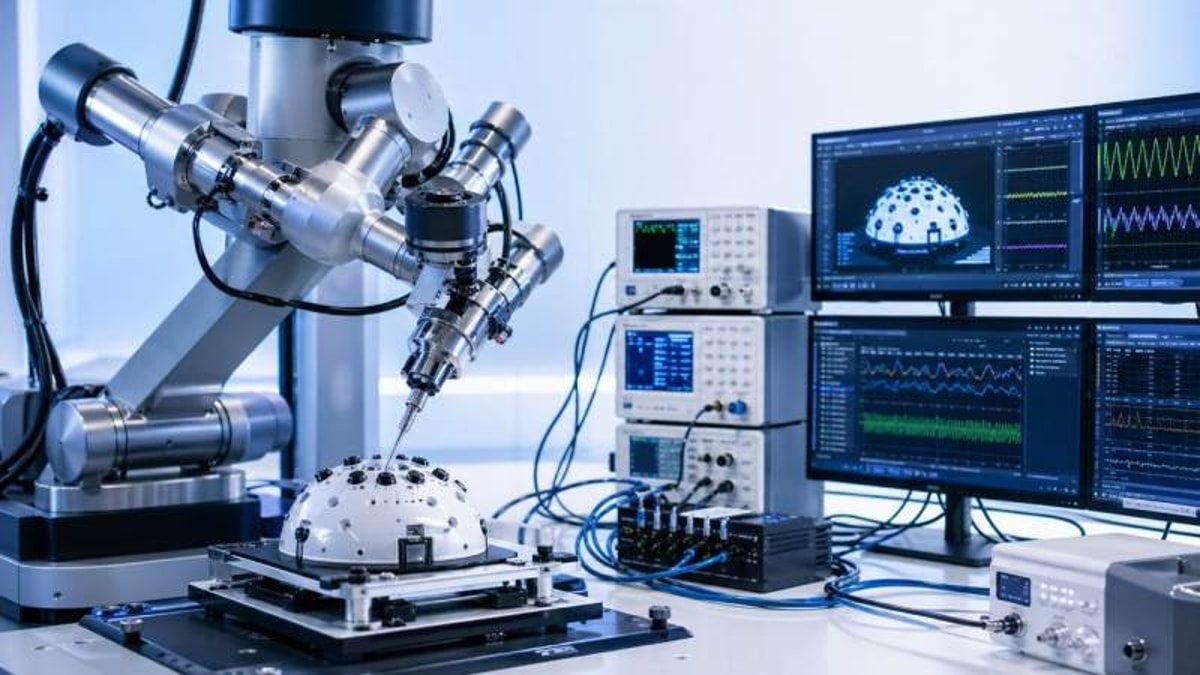

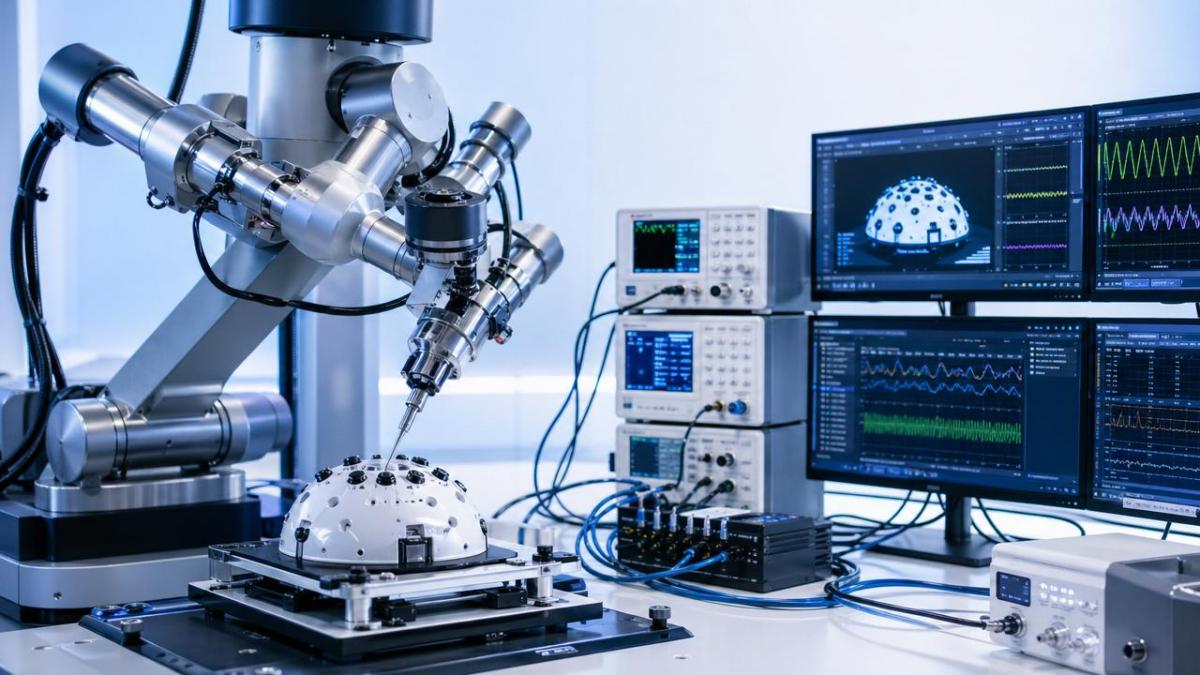

This change matters because the use context has changed. Modern surgical systems do not operate as isolated electromechanical devices. They are part of a larger data path involving imaging, control software, instrument actuation, networking, user interfaces, and, in some cases, remote collaboration layers. Each link can introduce delay, jitter, or inconsistency. As a result, acceptable performance in a bench demonstration may not translate to acceptable performance in a real procedure.

For technical evaluation teams, the practical question is therefore broader than a single latency number. A credible surgical robot latency test must reveal how delay behaves under load, during state transitions, across repeated tasks, and in situations where small timing errors can influence surgeon perception or tool placement. This is where independent benchmarking organizations such as VitalSync Metrics help buyers replace assumption with evidence.

The trend signal: acceptable delay is now judged by task sensitivity, not by headline specification alone

One of the clearest industry signals is that acceptable delay is increasingly defined by clinical task sensitivity rather than by a universal threshold. A platform used for relatively stable, repetitive manipulation may tolerate a different response profile than a system supporting fine dissection, microscale movement, or rapid camera-instrument coordination. In other words, “acceptable” is becoming scenario-based.

This is important because some suppliers still publish only best-case latency values measured under controlled conditions. Those figures may be technically correct, yet still insufficient for procurement decisions. Technical assessors now need to ask: Was the latency measured end-to-end? Was it measured during active instrument motion? What was the variability? How did the platform behave when vision processing, force feedback, or software overlays were simultaneously active?

The practical result is a more mature interpretation of the surgical robot latency test. The test is no longer about confirming that delay is “low.” It is about confirming that delay remains predictable, bounded, and clinically manageable across realistic use cases.

What is driving this change in surgical robot latency expectations

Several forces are pushing latency evaluation higher on the decision agenda. First, robotic surgery platforms are moving into more diverse clinical environments, which increases the range of tasks, operator profiles, and integration demands. Second, value-based procurement models are forcing hospitals to justify not only purchase price but also performance reliability over time. Third, regulatory and quality frameworks are placing greater emphasis on traceable evidence rather than generalized performance assertions.

Another factor is the increasing complexity of software layers. Advanced visualization, image enhancement, AI-assisted cues, and connectivity functions can all add computational burden. Even when each subsystem performs well independently, cumulative delay can become clinically relevant. This is why a surgical robot latency test should examine system architecture holistically rather than focusing only on actuator response.
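To make the cumulative effect concrete, the sketch below sums per-stage delays along a single serial data path and ranks the largest contributors. The stage names, the millisecond values, and the function names are hypothetical placeholders chosen for illustration, not measurements from any platform. It also assumes the stages run strictly in sequence; stages that run in parallel would not add up this way.

```python
# Hypothetical per-stage delays in milliseconds for one serial data path.
# Illustrative placeholders only, not measured values from any real system.
STAGE_DELAY_MS = {
    "input_sampling": 2.0,
    "control_software": 5.0,
    "actuation": 8.0,
    "video_capture_and_encode": 15.0,
    "image_enhancement": 12.0,
    "display": 10.0,
}


def end_to_end_delay_ms(stages):
    """Sum the stage delays, assuming every stage runs in sequence."""
    return sum(stages.values())


def largest_contributors(stages, top_n=3):
    """Return (stage, delay_ms, share_of_total) for the slowest stages."""
    total = end_to_end_delay_ms(stages)
    ranked = sorted(stages.items(), key=lambda item: item[1], reverse=True)
    return [(name, ms, ms / total) for name, ms in ranked[:top_n]]


if __name__ == "__main__":
    print(f"end-to-end: {end_to_end_delay_ms(STAGE_DELAY_MS):.1f} ms")
    for name, ms, share in largest_contributors(STAGE_DELAY_MS):
        print(f"{name}: {ms:.1f} ms ({share:.0%} of total)")
```

In this made-up budget no single stage looks alarming on its own, yet the visualization stages together account for a large share of the total, which is the kind of pattern an actuator-only test would miss.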

There is also a human factors driver. Surgeons adapt to some delay, but adaptation has limits. What often matters in practice is not just total delay, but whether the delay feels stable enough for the operator to maintain confidence. A response that is slightly slower yet highly consistent may be more manageable than one with lower nominal delay but frequent jitter spikes. For technical evaluators, this creates a broader benchmark requirement: combine engineering metrics with usability-relevant thresholds.
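The difference between nominal delay and stability can be illustrated with two invented sample sets. The sketch below is only an example under stated assumptions: both lists are hypothetical data, and the population standard deviation is used as one simple jitter indicator among several an evaluator might choose.

```python
import statistics

# Hypothetical latency samples in milliseconds; invented for illustration only.
STEADY_MS = [60, 61, 59, 60, 62, 60, 59, 61]    # slightly slower, consistent
SPIKY_MS = [40, 41, 39, 40, 120, 40, 41, 115]   # usually faster, with spikes


def stability_summary(samples_ms):
    """Return mean, population standard deviation, and worst case for one run."""
    return {
        "mean_ms": statistics.fmean(samples_ms),
        "stdev_ms": statistics.pstdev(samples_ms),
        "max_ms": max(samples_ms),
    }


steady = stability_summary(STEADY_MS)
spiky = stability_summary(SPIKY_MS)

if __name__ == "__main__":
    for label, summary in (("steady", steady), ("spiky", spiky)):
        print(label, {key: round(value, 2) for key, value in summary.items()})
```

With this invented data the spiky run has the slightly lower mean but a far larger spread and worst case, so a comparison based on averages alone would favor the less predictable system.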

How the impact differs across stakeholders

The growing importance of the surgical robot latency test affects multiple decision makers, but not in the same way. Procurement directors want confidence that the selected system will remain clinically acceptable after deployment, upgrades, and service events. Clinical engineering teams need to understand whether delays originate in hardware, software, network layers, or integration points. MedTech startups must demonstrate that their claimed responsiveness is not just technically plausible but reproducibly validated. Laboratory architects and benchmark institutions need test methods that reflect real operating conditions rather than idealized lab simplicity.

For hospital leadership, the issue is risk containment. Latency that appears marginal during installation can become more significant as more digital tools are connected into the surgical environment. For surgeons, the issue is confidence and precision. For compliance teams, the issue is evidence quality. These are different perspectives, but they converge on the same requirement: a latency assessment process that is transparent, repeatable, and clinically interpretable.

What delay is still acceptable in practice

In practice, there is no single universal number that answers every surgical robot latency test question. Acceptability depends on procedure type, motion scale, visual dependence, tool dynamics, control strategy, and user adaptation. That said, experienced evaluators increasingly look for bounded ranges and behavior patterns rather than a single pass-fail figure.

A practical benchmark framework often includes four questions. First, is the end-to-end delay low enough to avoid noticeable disruption during precision maneuvers? Second, is latency stable over time, or does it fluctuate under processing load? Third, can the system recover predictably from transient delay events? Fourth, does the observed delay create measurable degradation in target acquisition, path control, or fine adjustment tasks?

For many evaluators, the warning sign is not simply a moderately high delay value. The more serious concern is inconsistency. If the system responds differently from one movement to the next, or if visual feedback and instrument motion fall out of synchrony, the practical risk rises quickly. Therefore, acceptable delay in practice means delay that remains within a tested, repeatable range while preserving surgeon control fidelity under realistic conditions.

Signals that a vendor’s latency claim needs deeper scrutiny

Technical evaluators should be cautious when a supplier provides only average latency without percentiles, variance, or test context. A credible surgical robot latency test should disclose whether the value is controller-only, video-only, or full end-to-end. It should also clarify whether software services were active, whether motion commands were continuous or discrete, and whether the results were gathered over meaningful sample sizes.
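As a rough illustration of what a fuller disclosure could contain, the following sketch reports sample count, mean, percentiles, and maximum alongside a free-text description of the test context. The function names and the report fields are assumptions made for this example, not a standard reporting format, and the nearest-rank percentile method is one common convention among several.

```python
import math
import statistics


def percentile(samples_ms, pct):
    """Nearest-rank percentile: smallest sample with at least pct% at or below it."""
    if not samples_ms:
        raise ValueError("at least one sample is required")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def latency_report(samples_ms, context):
    """Summarize one test run; `context` records how the run was measured."""
    return {
        "context": context,
        "n": len(samples_ms),
        "mean_ms": statistics.fmean(samples_ms),
        "p50_ms": percentile(samples_ms, 50),
        "p95_ms": percentile(samples_ms, 95),
        "p99_ms": percentile(samples_ms, 99),
        "max_ms": max(samples_ms),
    }
```

A call such as `latency_report(samples, "end-to-end, continuous motion, overlays active")` keeps the numbers attached to the conditions under which they were gathered, which is exactly the information an average-only claim leaves out.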

Other warning signals include unusually clean results with no discussion of jitter, no comparison across workload scenarios, and no mapping between measured latency and task relevance. If a supplier cannot explain what happens when imaging overlays, recording functions, or network-dependent modules are activated, then the latency claim may not reflect the deployed environment. In the current market, these omissions matter because hospitals are buying integrated ecosystems, not just isolated robotic arms.

How evaluation criteria are evolving for future-ready procurement

The next phase of the surgical robot latency test will likely become more standardized, more scenario-based, and more closely tied to procurement frameworks. Evaluation teams are moving toward comparative protocols that test baseline delay, peak delay, jitter tolerance, synchronization between visual and mechanical response, and performance drift after software updates. This reflects a broader market direction: technical acceptance is becoming lifecycle-based rather than installation-based.
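A lifecycle-based check of this kind can be sketched as a comparison between a run recorded before a software update and one recorded after it. Everything in the example is hypothetical: the metric names, the values, and the allowed margins are placeholders that an evaluation team would replace with its own validated figures.

```python
def find_regressions(before, after, allowed_increase_ms):
    """Return the metrics whose value rose by more than the allowed margin."""
    regressions = {}
    for metric, limit in allowed_increase_ms.items():
        increase = after[metric] - before[metric]
        if increase > limit:
            regressions[metric] = increase
    return regressions


# Hypothetical summary metrics in milliseconds; placeholders, not real results.
BEFORE_UPDATE = {"baseline_ms": 48.0, "peak_ms": 70.0, "jitter_ms": 3.0}
AFTER_UPDATE = {"baseline_ms": 49.0, "peak_ms": 95.0, "jitter_ms": 3.5}

# Hypothetical acceptance margins agreed in the test plan.
ALLOWED_INCREASE_MS = {"baseline_ms": 2.0, "peak_ms": 10.0, "jitter_ms": 1.0}

regressions = find_regressions(BEFORE_UPDATE, AFTER_UPDATE, ALLOWED_INCREASE_MS)

if __name__ == "__main__":
    print(regressions or "no regressions beyond the allowed margins")
```

In this invented case the baseline and jitter stay within their margins while the peak delay does not, so the update would be flagged even though the typical response barely changed.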

For organizations operating under MDR or in other compliance-sensitive environments, the implication is clear. Evidence packages should connect latency metrics to risk management logic, validation protocols, and intended use boundaries. A strong dataset does not just prove that the system is fast. It shows that the system remains controllable, explainable, and safe across foreseeable operating conditions.

What technical evaluators should do now

A practical response starts with better questions. Ask for end-to-end latency definitions, not generic response claims. Request workload-based test conditions. Examine consistency metrics such as percentiles and jitter, not just best-case headline numbers. Align latency review with the most clinically sensitive maneuvers the platform is expected to support. If the system is intended for a highly integrated digital operating room, include interface and interoperability effects in the test plan.

It is also wise to compare internal test results with independent benchmarking whenever possible. Organizations such as VitalSync Metrics add value by converting technical observations into standardized evidence that procurement, engineering, and quality teams can all interpret. This reduces the gap between product promise and deployment reality.

Most importantly, treat the surgical robot latency test as part of a broader performance integrity review. Delay is not an isolated metric. It interacts with imaging quality, control mapping, actuation precision, software stability, and service consistency. The systems that will stand out in the coming market are not only those with low delay, but those with transparent, repeatable, clinically meaningful latency behavior.

Final judgment: focus on bounded, explainable, clinically relevant delay

The market is moving toward a more disciplined view of what acceptable delay really means. In a surgical robot latency test, the best question is no longer “Is the latency low?” but “Is the latency bounded, stable, and acceptable for the intended clinical task under realistic operating conditions?” That shift reflects deeper changes in procurement standards, digital integration, and evidence expectations across healthcare technology.

If your organization needs to judge how this trend affects product selection, supplier qualification, or validation planning, focus on a few core questions: Which task scenarios define your true latency risk? Are vendor results end-to-end and repeatable? How much variability appears under load? And does the measured delay remain clinically manageable, not just technically defensible? Those answers will do far more than a headline specification to determine whether a system is genuinely ready for practice.
