Surgical robot latency test results that start to affect control feel

In surgical robotics, milliseconds can shape operator confidence and procedural precision. This article examines surgical robot latency test findings and identifies the performance thresholds where delay begins to alter control feel, while linking these results to broader procurement concerns such as EMC testing for medical electronics, signal-to-noise ratio in patient monitors, and ISO 13485 audit requirements. For buyers, engineers, and clinical users, understanding latency is essential to verifying real-world safety, compliance, and usability.

For hospitals, robotics developers, and testing laboratories, latency is not just a technical number on a data sheet. It affects fine motion stability, visual-motor coordination, error recovery, and user fatigue over procedures that may last 45 minutes or more. A system that feels crisp in a demo room can become noticeably harder to control once imaging pipelines, network handshakes, filtering layers, and safety interlocks are all active.

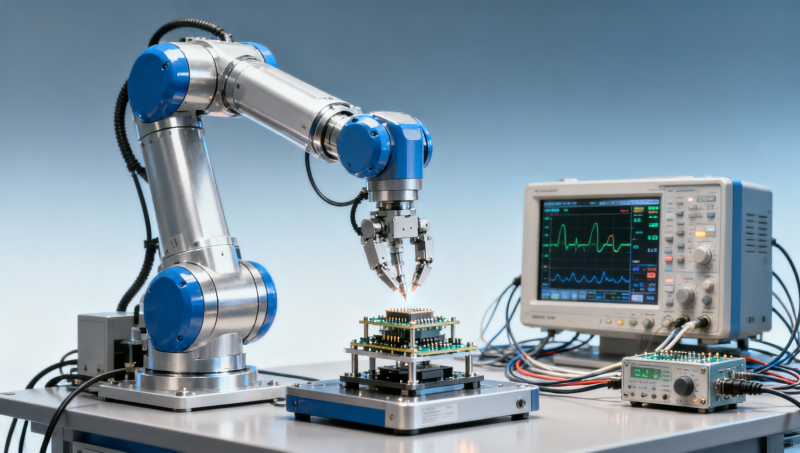

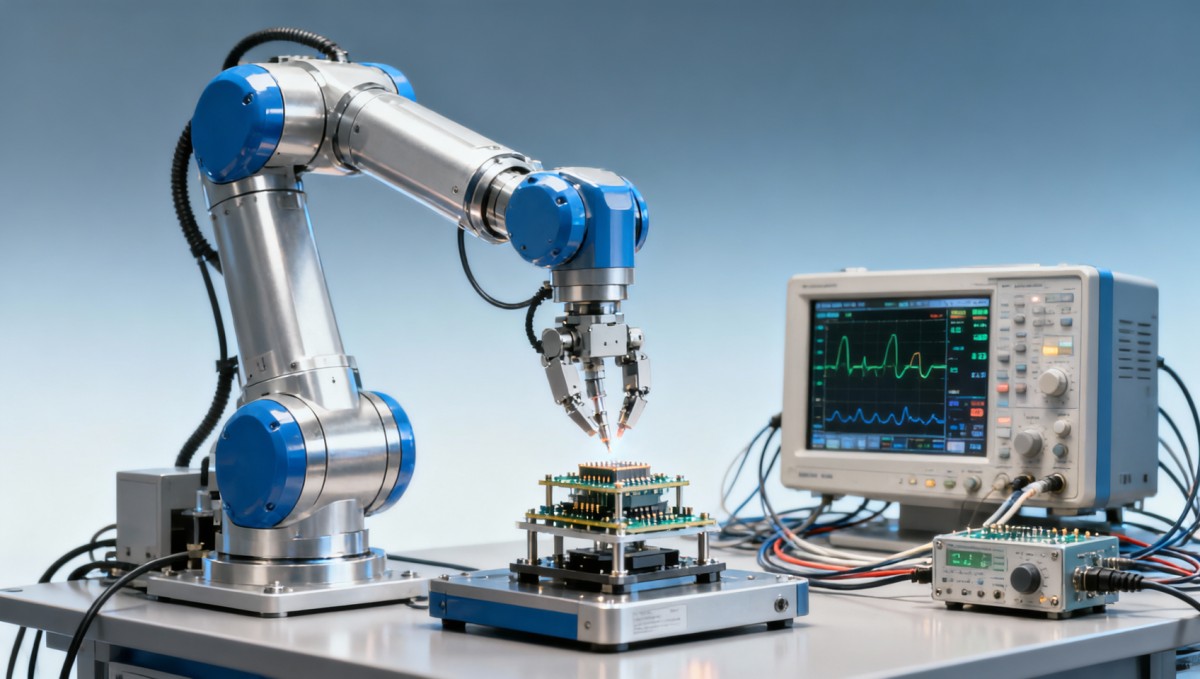

That is why independent benchmarking matters. VitalSync Metrics (VSM) approaches surgical robot latency in the same engineering-first way it evaluates signal quality, electromagnetic compatibility, and compliance readiness across the MedTech supply chain. The objective is simple: translate technical performance into procurement-grade evidence that supports safer adoption, more reliable comparisons, and better long-term sourcing decisions.

Why latency starts to change surgical robot control feel

In robotic surgery, total system latency usually combines several delay sources: input capture, signal processing, communication transport, actuator response, visualization delay, and software safety checks. In many practical setups, the combined end-to-end delay falls somewhere between 20 ms and 150 ms. Operators may tolerate some delay, but they do not perceive all latency bands the same way.
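As a minimal sketch of how these delay sources add up, the snippet below sums a hypothetical per-stage budget. The stage names mirror the list above, but every millisecond value is an illustrative assumption rather than a measurement, and the simple sum assumes the stages run one after another with no overlap.

```python
# Hypothetical per-stage delays in milliseconds (illustrative values only).
budget_ms = {
    "input_capture": 4.0,
    "signal_processing": 8.0,
    "communication_transport": 6.0,
    "actuator_response": 12.0,
    "visualization": 18.0,
    "software_safety_checks": 5.0,
}


def total_latency_ms(stages):
    """Sum the per-stage delays, assuming strictly serial stages."""
    return sum(stages.values())


def largest_contributor(stages):
    """Return the name of the stage that adds the most delay."""
    return max(stages, key=stages.get)


total = total_latency_ms(budget_ms)
print(f"End-to-end estimate: {total:.1f} ms")
print(f"Largest contributor: {largest_contributor(budget_ms)}")
```

A budget like this is only a planning aid. Pipelined or parallel stages can make the real end-to-end figure differ from the sum, which is one reason integrated measurement is still needed.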

As a practical engineering rule, control feel often remains broadly acceptable below about 50 ms in direct manipulation tasks, especially where motion scaling and stable visualization are well tuned. Between 50 ms and 80 ms, experienced users may begin to notice a softer or slightly disconnected response. Once latency moves above 100 ms, the delay is more likely to influence trajectory correction, clutch timing, and confidence during delicate maneuvers.

The exact threshold depends on task type. Needle targeting, micro-dissection, and tremor-sensitive movement tend to reveal delay earlier than gross positioning. A 70 ms delay may be manageable for arm repositioning but problematic for tasks requiring 1–2 mm correction windows. This is why a single “average latency” value can be misleading if it is not paired with task-specific test conditions.
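The bands described above can be sketched as a small classifier. The function name, the labels, and the exact boundary handling are assumptions made for illustration. The 80 to 100 ms range is not characterized in detail here, so it is labeled conservatively. Because thresholds are task-dependent, they are passed in as parameters rather than hard-coded.

```python
def latency_band(latency_ms, acceptable_below=50.0, noticeable_up_to=80.0,
                 scrutiny_up_to=100.0):
    """Map an end-to-end latency to a coarse engineering band.

    Default thresholds follow the rule-of-thumb figures in the text;
    they are not universal limits and should be tuned per task.
    """
    if latency_ms < 0:
        raise ValueError("latency must be non-negative")
    if latency_ms < acceptable_below:
        return "broadly acceptable"
    if latency_ms <= noticeable_up_to:
        return "may feel softer or slightly disconnected"
    if latency_ms <= scrutiny_up_to:
        return "needs closer scrutiny"
    return "likely to affect delicate maneuvers"


for value in (45, 75, 90, 120):
    print(value, "ms ->", latency_band(value))

# A stricter, hypothetical profile for a fine-correction task.
print(70, "ms (strict) ->", latency_band(70, acceptable_below=40.0,
                                         noticeable_up_to=60.0,
                                         scrutiny_up_to=80.0))
```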

Another factor is consistency. Operators often adapt better to a stable 60 ms delay than to a system fluctuating between 35 ms and 95 ms. Jitter changes perceived responsiveness from one movement to the next. In procurement reviews, low average latency should therefore be considered together with jitter distribution, frame timing stability, and peak-delay events under load.
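To illustrate why the mean alone hides this difference, the sketch below compares two hypothetical sample sets: one holding close to 60 ms and one swinging between 35 ms and 95 ms. The sample values and the `jitter_summary` helper are assumptions for illustration, and standard deviation plus peak-to-peak range is only one of several reasonable ways to describe jitter.

```python
from statistics import mean, pstdev


def jitter_summary(samples_ms):
    """Summarize central tendency and spread for latency samples."""
    return {
        "mean_ms": mean(samples_ms),
        "stdev_ms": pstdev(samples_ms),
        "peak_to_peak_ms": max(samples_ms) - min(samples_ms),
    }


# Hypothetical measurements in milliseconds.
stable = [59, 60, 61, 60, 60, 59, 61, 60]
fluctuating = [35, 95, 40, 90, 50, 80, 45, 85]

for name, samples in (("stable", stable), ("fluctuating", fluctuating)):
    summary = jitter_summary(samples)
    print(f"{name}: mean={summary['mean_ms']:.1f} ms, "
          f"stdev={summary['stdev_ms']:.1f} ms, "
          f"peak-to-peak={summary['peak_to_peak_ms']} ms")
```

The two means differ by only 5 ms, while the peak-to-peak spread differs by 58 ms. That spread is the figure an operator is more likely to feel from one movement to the next.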

Observed latency bands and operator impact

The following matrix summarizes typical engineering interpretations of latency bands in surgical robot testing. These are practical ranges used for evaluation planning rather than universal legal thresholds, and they should always be validated against the intended clinical workflow.

The main takeaway is that latency begins to affect control feel well before catastrophic performance failure occurs. Buyers and engineers should not wait for obvious instability. A shift from 45 ms to 75 ms may already alter operator preference, training burden, and procedural efficiency, especially in systems marketed for precision-led interventions.

What users tend to notice first

- A slight lag between hand input and tool response during fine directional changes.

- Overcorrection when attempting sub-3 mm adjustments.

- Greater mental effort during visually guided motion in narrow spaces.

- Reduced confidence when switching from gross motion to precision control.

How surgical robot latency should be tested in real conditions

A meaningful surgical robot latency test cannot rely on idle-state measurement alone. The robot should be evaluated under representative operating conditions, including live image rendering, active safety logic, realistic instrument payloads, and repeated motion patterns. Testing only one subsystem, such as actuator response, may understate the delay that surgeons actually experience at the console.

A robust protocol typically includes at least 3 layers of evaluation: subsystem delay, integrated end-to-end latency, and task-based user perception. For engineering validation, 30 to 50 repeated trials per condition can help reveal median response, 95th percentile behavior, and outlier spikes. Procurement teams should ask whether the reported figure is an average, a worst case, or a percentile-based result.
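A minimal sketch of how repeated trials might be reduced to the figures a procurement team should ask about. The trial data is hypothetical, the percentile uses the nearest-rank method (other definitions give slightly different values), and the spike rule of 1.5 times the median is an arbitrary assumption.

```python
import math
from statistics import median


def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile: smallest value covering pct% of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize_trials(samples_ms, spike_factor=1.5):
    """Report median, 95th percentile, worst case, and outlier spikes."""
    med = median(samples_ms)
    return {
        "n": len(samples_ms),
        "median_ms": med,
        "p95_ms": percentile_nearest_rank(samples_ms, 95),
        "worst_ms": max(samples_ms),
        # Assumed spike rule: anything above spike_factor x median.
        "spikes_ms": [s for s in samples_ms if s > spike_factor * med],
    }


# Hypothetical 30-trial condition, values in milliseconds.
trials = [50] * 10 + [52] * 10 + [55] * 8 + [60, 95]
print(summarize_trials(trials))
```

Reporting the median, the 95th percentile, and the worst case side by side makes it harder for a single favorable average to stand in for the whole distribution.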

Environmental loading also matters. Video compression, electromagnetic interference, thermal buildup, and software logging can each add milliseconds. A system performing at 42 ms in bench mode may rise to 68 ms when the imaging chain, network stack, and safety monitoring are fully enabled. In a value-based procurement environment, that difference can decide whether the technology supports its intended clinical promise.

From a laboratory perspective, repeatability is as important as speed. If the same setup produces results that vary by 15–20 ms across identical runs, either the measurement method is weak or the system timing is unstable. Both issues should be resolved before comparative supplier scoring is finalized.
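One way to sketch a repeatability check is to compare the median of each identical run and flag the setup when the medians drift too far apart. The run data is hypothetical, and the 15 ms limit is taken from the lower end of the range mentioned above as an illustrative default, not a recommended acceptance value.

```python
from statistics import median


def run_to_run_spread_ms(runs):
    """Difference between the highest and lowest per-run median."""
    medians = [median(run) for run in runs]
    return max(medians) - min(medians)


def is_repeatable(runs, max_spread_ms=15.0):
    """True when identical runs agree within the allowed spread."""
    return run_to_run_spread_ms(runs) < max_spread_ms


# Hypothetical latency samples (ms) from three identical runs.
runs = [
    [48, 50, 52],
    [49, 51, 53],
    [66, 68, 70],  # this run drifted noticeably
]

print("spread:", run_to_run_spread_ms(runs), "ms")
print("repeatable:", is_repeatable(runs))
```

A failed check does not say whether the instrumentation or the system timing is at fault. It only signals that supplier scoring should wait until the cause is known.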

Recommended test points for benchmarking

The table below outlines a practical structure for benchmark-oriented latency testing across engineering, clinical usability, and procurement review.

For procurement teams, the most valuable evidence is usually integrated latency plus jitter under realistic load. That combination offers a clearer view of operator experience than isolated component timing alone.

A 5-step evaluation flow

- Define the intended surgical tasks and acceptable correction windows.

- Measure subsystem timing with traceable instrumentation.

- Run integrated tests with full imaging and safety functions enabled.

- Review average, percentile, and worst-case latency values.

- Validate findings with operator trials lasting at least 20–30 minutes.

Why latency cannot be separated from EMC, signal quality, and quality systems

Latency is often discussed as a software or controls issue, but in medical electronics it is also influenced by electromagnetic robustness, sensor fidelity, and design controls. A robotic platform may meet a nominal timing target in a clean lab yet show degraded performance when exposed to electromagnetic noise, cable loading, or device coexistence conditions common in operating rooms.

This is where EMC testing for medical electronics becomes commercially relevant. If EMI events trigger retransmissions, filtering overhead, display disturbances, or intermittent control-state resets, the operator may experience irregular delay rather than a steady timing profile. Even a 10–15 ms increase during high-interference events can change the subjective feel of the console.

The same principle applies to signal-to-noise ratio in patient monitors and other connected clinical systems. Signal quality affects how much filtering and smoothing the device must perform before presenting usable data. In robotic surgery, poor upstream signal quality in imaging or sensing subsystems can increase processing time, reduce confidence in guidance cues, or force conservative control behavior that feels slower than the raw actuator performance suggests.

Quality management also matters. Under ISO 13485 audit requirements, organizations are expected to show design traceability, validation discipline, change control, and risk-based verification. If latency-critical software updates are introduced without strong validation records, the procurement risk extends beyond one performance metric. It becomes a lifecycle governance issue affecting maintenance, complaint handling, and post-market confidence.

Cross-functional factors that influence control feel

The following comparison helps buyers see why latency should be reviewed as part of a broader technical integrity picture rather than as a single isolated benchmark.

The practical conclusion is clear: a latency claim is only as credible as the surrounding test discipline. When VSM benchmarks systems, the purpose is not merely to record milliseconds. It is to determine whether those milliseconds remain stable under real-world electronic, operational, and quality-system conditions.

Common procurement mistakes

- Accepting a low headline latency without seeing test load conditions.

- Ignoring jitter because only mean values are presented.

- Treating EMC and quality audits as separate from usability outcomes.

Procurement criteria for hospitals, MedTech teams, and laboratory architects

For procurement directors and enterprise decision-makers, the key question is not simply whether a surgical robot works. It is whether the system’s measured latency, compliance profile, and serviceability support safe, repeatable performance over its full lifecycle. Capital purchasing decisions often span 5–10 years, so weak technical verification at the start can create expensive operational constraints later.

A sound supplier review should include at least 4 dimensions: performance evidence, compliance readiness, maintainability, and workflow fit. Performance evidence means integrated latency data, jitter distribution, and user-trial findings. Compliance readiness includes design validation, relevant regulatory pathways, and controlled documentation. Maintainability covers software update governance, field service response, and replacement component continuity. Workflow fit considers room integration, operator training hours, and compatibility with existing imaging or IT infrastructure.

Laboratory architects and evaluators should also consider test repeatability during acceptance. If the same system is installed across multiple sites, latency behavior should remain within a defined tolerance band, such as ±10 ms or another clinically justified range. Without such acceptance criteria, site-specific networking or shielding issues may go unnoticed until users begin reporting inconsistent control feel.
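A multi-site acceptance check along these lines could be sketched as follows. The site names, the reference value, and the measured figures are hypothetical, and the ±10 ms default simply reuses the example tolerance above.

```python
def sites_outside_tolerance(site_latency_ms, reference_ms, tolerance_ms=10.0):
    """Return each out-of-band site with its deviation from the reference."""
    return {
        site: value - reference_ms
        for site, value in site_latency_ms.items()
        if abs(value - reference_ms) > tolerance_ms
    }


# Hypothetical integrated latency per installation, in milliseconds.
measured = {"site_a": 52.0, "site_b": 58.0, "site_c": 67.0}
reference = 54.0  # assumed factory-acceptance baseline

flagged = sites_outside_tolerance(measured, reference)
print(flagged if flagged else "all sites within tolerance")
```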

For startup MedTech companies, transparent latency documentation can strengthen investor, partner, and customer confidence. A smaller company may not have the scale advantage of an established manufacturer, but it can still differentiate through rigorous, independently reviewable engineering evidence.

A practical supplier comparison framework

The matrix below can be used during tender review, technical due diligence, or pre-clinical platform selection.

This framework helps prevent a common purchasing error: choosing a system with impressive marketing claims but incomplete evidence on controllability, stability, and lifecycle reliability. In surgical robotics, technical truth is the foundation of safe commercial scaling.

Checklist before final approval

- Confirm whether latency figures are measured end-to-end or by subsystem only.

- Review jitter, not just mean response time.

- Request EMC-linked operational performance evidence.

- Verify documentation discipline under ISO 13485 quality processes.

- Define acceptance criteria for site installation and periodic revalidation.

Implementation, ongoing validation, and FAQ for decision-makers

Once a surgical robot is selected, latency management does not end with factory acceptance. Hospitals and developers should plan periodic validation at installation, after software updates, and during major accessory changes. A realistic interval may be every 6–12 months, with additional checks after network reconfiguration, imaging subsystem replacement, or any control software revision that affects timing paths.

Operational teams should also create escalation triggers. For example, if measured latency rises by more than 15 ms from baseline, or if jitter expands beyond a pre-approved threshold during 95th percentile review, the system should enter a deeper engineering investigation. This reduces the chance that subtle degradation will accumulate until it affects clinical workflow.
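The escalation rule can be sketched as a simple check against baseline. The 15 ms rise limit comes from the example above. The 20 ms jitter limit is a placeholder for whatever pre-approved threshold an organization defines, and the function name and message wording are assumptions.

```python
def escalation_reasons(baseline_ms, measured_ms, p95_jitter_ms,
                       max_rise_ms=15.0, max_p95_jitter_ms=20.0):
    """List the triggers that call for a deeper engineering investigation."""
    reasons = []
    rise = measured_ms - baseline_ms
    if rise > max_rise_ms:
        reasons.append(f"latency rose {rise:.1f} ms above baseline")
    if p95_jitter_ms > max_p95_jitter_ms:
        reasons.append(f"95th percentile jitter is {p95_jitter_ms:.1f} ms")
    return reasons


# Hypothetical periodic check after a software update.
reasons = escalation_reasons(baseline_ms=48.0, measured_ms=66.0,
                             p95_jitter_ms=12.0)
print(reasons if reasons else "within limits")
```

Returning the reasons rather than a bare flag keeps the validation record explicit about which trigger fired.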

Independent benchmarking adds value here because it supports a neutral reference point between supplier claims and user perception. For organizations procuring across multiple product categories, the same evidence mindset used for surgical robots can strengthen reviews of patient monitors, wearables, implants, and laboratory systems. That continuity is especially important in procurement environments where technical, regulatory, and financial teams all need a common decision language.

VSM’s role in that process is to turn engineering detail into sourcing confidence. By examining measurable parameters rather than promotional positioning, decision-makers can prioritize devices that remain reliable, testable, and supportable long after the initial purchase cycle.

FAQ: how buyers and operators usually frame the issue

How much latency is acceptable in a surgical robot?

There is no single universal number, because acceptable latency depends on task precision, visual feedback design, motion scaling, and user training. In many practical evaluations, systems below 50 ms feel responsive for a wide range of tasks, while systems above 80–100 ms demand closer scrutiny for precision applications.

Why is jitter as important as average delay?

Average delay can hide unstable timing behavior. A robot averaging 55 ms but fluctuating by 30 ms may feel less controllable than a robot holding a stable 65 ms. Users often adapt to consistency more easily than to unpredictable response variation.

What should procurement teams ask suppliers to provide?

Ask for end-to-end latency test conditions, repeated-run statistics, percentile results, EMC-related performance observations, and quality documentation showing controlled verification after software or hardware changes. If possible, request third-party or independent benchmark review rather than relying on internal vendor demos alone.

How long does a meaningful validation cycle usually take?

For a focused benchmark, planning and execution may take 2–4 weeks depending on access to the full system, operator availability, and the number of test conditions. Broader compliance-linked reviews can take longer if documentation gaps or environmental test dependencies must be resolved.

Surgical robot latency test results become truly useful when they are interpreted in context: not as isolated milliseconds, but as indicators of control feel, system stability, compliance maturity, and long-term procurement value. For researchers, operators, buyers, and enterprise leaders, the most reliable decisions come from integrated evidence that connects performance to real clinical use.

If you are evaluating surgical robotics or other MedTech platforms where engineering integrity matters, VitalSync Metrics can help you benchmark critical parameters, compare technical evidence, and reduce uncertainty before procurement or deployment. Contact us to discuss your application, request a tailored evaluation framework, or learn more about data-driven MedTech verification solutions.

