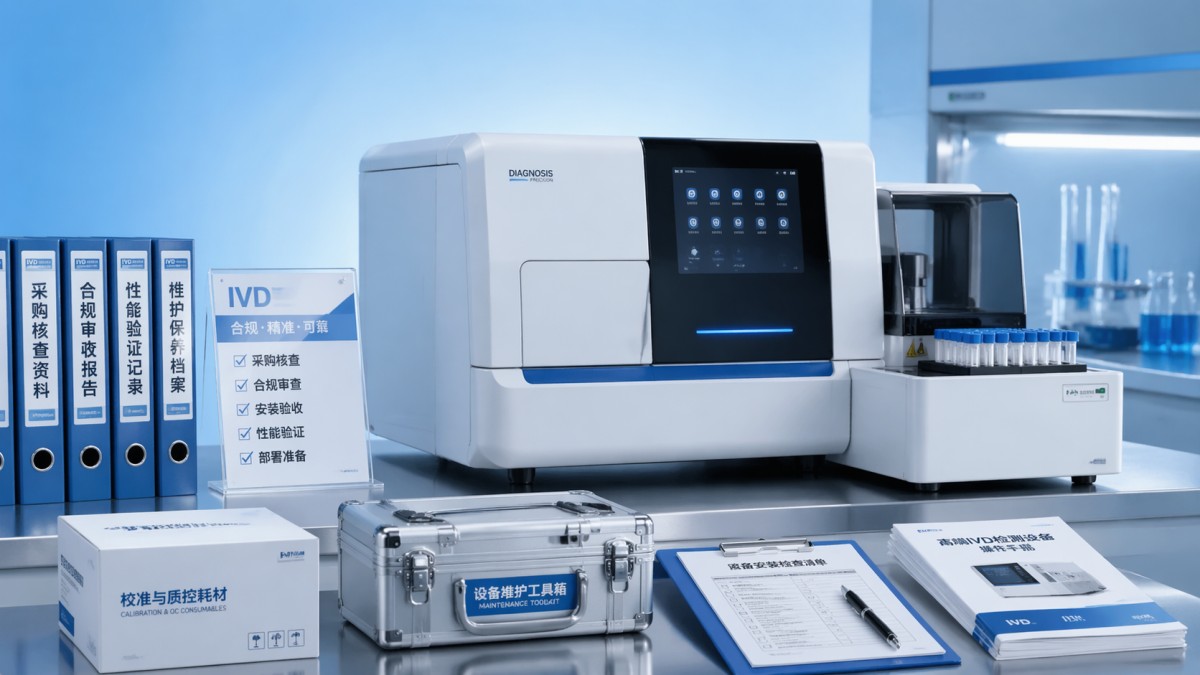

IVD hardware buying checklist for smoother deployment

Buying IVD hardware is no longer just about price or availability; it is about proving performance, compliance, and deployment readiness. For procurement teams, lab operators, and decision-makers, this checklist highlights what matters most: healthcare compliance solutions, medical equipment safety standards, life sciences instrumentation, and long-term medical equipment maintenance. Use it to reduce risk, compare vendors objectively, and achieve smoother deployment with confidence.

What should be checked before you compare IVD hardware quotes?

Many IVD hardware projects slow down before installation because teams compare quotations too early. In practice, the first screening should focus on 4 core layers: intended use, workflow fit, regulatory pathway, and serviceability. If these are unclear, even a competitively priced analyzer, reader, incubator, liquid handling unit, or sample preparation platform can become a deployment risk within the first 30–90 days.

For information researchers and enterprise decision-makers, the central question is not “Which supplier sounds stronger?” but “Which hardware configuration can be verified against our clinical, operational, and compliance requirements?” This is where independent benchmarking matters. VitalSync Metrics (VSM) helps teams translate vendor language into measurable engineering criteria so procurement is based on evidence rather than presentation quality.

For operators and laboratory users, the buying checklist should also cover practical deployment factors. These include bench footprint, ambient operating range, operator training time, consumable handling, calibration frequency, and routine maintenance burden. A system that performs well in a controlled demo may still underperform in a real laboratory running 2 shifts per day or supporting fluctuating sample volumes across weekdays and peak outbreak periods.

A useful pre-quote checklist should confirm whether the project is replacing an existing device, adding capacity, or enabling a new assay menu. Those 3 scenarios drive different decisions on throughput, connectivity, and validation effort. For example, a replacement project may prioritize interface compatibility and short downtime, while a greenfield deployment may require broader infrastructure review across power supply, ventilation, LIS connectivity, and preventive service planning.

The 5 first-pass questions procurement teams should ask

- What clinical or laboratory workflow must the IVD hardware support, and what sample volume range is expected per day, per shift, or per batch?

- Which healthcare compliance requirements are mandatory for the target market, such as MDR/IVDR documentation alignment, electrical safety evidence, and traceability records?

- What installation conditions are required, including temperature range, humidity tolerance, power stability, network access, and physical space?

- How often will calibration, QC checks, preventive maintenance, and operator retraining be needed over a 12-month operating cycle?

- What evidence exists for long-term reliability, component durability, spare parts availability, and field service response times?

If these questions are answered before vendor comparison, selection becomes more objective. It also shortens internal review cycles because technical teams, procurement teams, and leadership can work from the same evaluation framework. In many organizations, this simple alignment step removes 1–2 rounds of unnecessary quotation revision.

Which technical and compliance checkpoints matter most for smoother deployment?

Smooth deployment depends on more than assay capability. IVD hardware selection should examine technical performance together with medical equipment safety standards and post-installation stability. In practical terms, teams should review 6 checkpoint groups: analytical support function, mechanical reliability, software and connectivity, safety compliance, environmental tolerance, and maintenance design. Missing even 1 group can delay validation or increase operating cost during the first 6–12 months.

Regulatory and quality documentation should be reviewed early, not after commercial negotiation. Depending on target geography and device classification, teams commonly need technical files, risk management evidence, labeling clarity, traceability records, and documented change control. References to MDR/IVDR, IEC-related electrical safety frameworks, and general quality management alignment are especially relevant when the hardware will be installed in hospitals, clinical labs, or regulated manufacturing environments.

Performance evidence should also be separated into “demo-level” and “deployment-level” proof. Demo-level proof often shows a narrow best-case scenario. Deployment-level proof examines repeatability, warm-up behavior, error recovery, environmental sensitivity, accessory dependence, and uptime support under routine workloads. VSM’s benchmarking approach is valuable here because it frames hardware not as a brochure item, but as a system with measurable engineering behavior.

For life sciences instrumentation, integration requirements are increasingly important. A device may be technically acceptable yet difficult to deploy because it lacks stable data export, user access control, audit trail support, or compatibility with middleware and LIS ecosystems. Procurement teams should request interface details during evaluation, because retrofitting connectivity after installation can extend project timelines by 2–8 weeks.

Key evaluation dimensions for IVD hardware procurement

The table below converts technical and compliance requirements into an evaluation structure that procurement, engineering, and lab operations teams can review together. It is especially useful when multiple vendors appear similar on headline specifications but differ in deployment readiness.

This evaluation structure helps separate “feature-rich” from “deployment-ready.” It also improves negotiation quality because the discussion shifts from generic claims to documented readiness in service, compliance, and workflow performance.

A practical compliance review sequence

- Confirm intended use and target market requirements before discussing shipment dates.

- Review documentation completeness, including safety, traceability, and revision control records.

- Check whether installation, validation, and operator training are included in the supplier scope.

- Verify maintenance planning, spare part access, and escalation route for field failures.

This 4-step sequence is simple, but it prevents a common problem: selecting hardware that is compliant on paper but arrives without enough operational support for a timely launch.

How do you compare vendors beyond price and brochure claims?

Vendor comparison should not stop at initial capital cost. In B2B healthcare procurement, smoother deployment often comes from choosing the offer with fewer hidden burdens across installation, validation, training, maintenance, and documentation. A lower upfront price can become more expensive if it adds 2 extra site visits, longer operator onboarding, or repeated service interruptions during the first year.

A strong comparison method uses 3 scoring layers. First, review hard requirements such as compliance, footprint, and compatibility. Second, compare operating value such as throughput fit, maintenance effort, and consumable logistics. Third, score implementation confidence, including supplier response quality, training completeness, and escalation transparency. This approach works better than a single weighted price model because it reflects real deployment risk.

Independent technical review is especially valuable when vendors use inconsistent terminology. One supplier may describe a system as low-maintenance, another as field-optimized, and a third as robust for routine use. Without standardized benchmarking, those phrases are difficult to compare. VSM supports procurement teams by turning vendor statements into measurable criteria and standardized whitepaper-style assessments that can be reviewed across functions.

For decision-makers managing multiple sites, the comparison should also include scalability. A single-site purchase and a 5-site rollout are not the same project. Multi-site deployment requires stronger control over documentation consistency, installation repeatability, training format, and spare parts planning. If rollout is phased over 2–4 quarters, even small differences in vendor discipline can become material.

Sample vendor comparison matrix for procurement review

The following table can be used during shortlist evaluation. It compares suppliers on practical deployment factors rather than purely commercial language, helping teams identify the option with lower operational friction.

A matrix like this makes hidden differences visible. It also helps explain procurement choices to finance and executive teams, because the final decision is linked to deployment outcomes, not just unit price.

Common comparison mistakes

- Treating all service contracts as equivalent without checking response windows, spare part scope, and preventive visit frequency.

- Ignoring operator workload, especially when daily QC, cleaning, or calibration steps exceed realistic staffing levels.

- Assuming compliance documents can be supplied later, even when internal approval requires them before purchase order release.

- Comparing peak throughput only, without reviewing actual routine throughput under mixed sample loads.

These mistakes are common because brochures emphasize capabilities, while deployment success depends on constraints. A disciplined comparison process keeps both in view.

What does a deployment-ready procurement checklist look like in practice?

A deployment-ready checklist should connect pre-purchase review with post-delivery execution. That means the same document should guide specification confirmation, factory or documentation review, site preparation, acceptance testing, and early maintenance planning. When these steps are separated across teams, projects often lose 1–3 weeks through duplicated review and unresolved handoff questions.

For hospital groups, laboratories, and MedTech startups, the most effective checklist usually includes 6 sections: application fit, technical verification, compliance readiness, installation conditions, service model, and commercial terms. Each section should identify pass/fail items, supporting evidence, responsible owner, and deadline. This is far more useful than an unstructured request for quotation template.
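One way to keep such a checklist structured is to record each item with the four attributes named above. The sketch below is a hypothetical layout: the `ChecklistItem` fields, helper names, and sample entries are assumptions for illustration, and only the six section names come from the text.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# The six sections named in the checklist description.
SECTIONS = (
    "application fit",
    "technical verification",
    "compliance readiness",
    "installation conditions",
    "service model",
    "commercial terms",
)


@dataclass
class ChecklistItem:
    section: str
    description: str
    owner: str
    deadline: date
    evidence: str = ""             # reference to the supporting document, if any
    passed: Optional[bool] = None  # None means not yet reviewed


def sections_without_items(items: list[ChecklistItem]) -> list[str]:
    """List sections that have no checklist item at all."""
    covered = {item.section for item in items}
    return [s for s in SECTIONS if s not in covered]


def open_items(items: list[ChecklistItem], today: date) -> list[tuple[str, str, bool]]:
    """Return (description, owner, overdue) for every item not yet passed."""
    return [
        (item.description, item.owner, item.deadline < today)
        for item in items
        if item.passed is not True
    ]


# Illustrative entries only.
items = [
    ChecklistItem("compliance readiness", "Traceability records received",
                  "QA lead", date(2025, 3, 1), evidence="DOC-001", passed=True),
    ChecklistItem("installation conditions", "Bench footprint confirmed",
                  "Lab manager", date(2025, 3, 10)),
]

print(sections_without_items(items))
print(open_items(items, today=date(2025, 3, 15)))
```

Keeping "not yet reviewed" separate from "failed" makes it easier to see which items are blocked and which have simply not been looked at.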

Medical equipment maintenance should be part of buying decisions, not an afterthought. Teams should ask how many wear components are operator-replaceable, what typical preventive service intervals look like, whether remote diagnostics are available, and how long common spare part replenishment may take. Even a standard 5-day shipping delay can have a serious impact if there is no backup device in the workflow.

Deployment planning should also define acceptance criteria in advance. Instead of relying on a general statement that the unit is installed, organizations should specify 4–6 acceptance points such as power-on stability, calibration completion, interface testing, operator training completion, safety check review, and first controlled run under site conditions. This reduces disagreement at handover.
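Written acceptance criteria can be tracked as a simple sign-off record. The following is a minimal sketch under stated assumptions: the six acceptance points are taken from the paragraph above, while the `handover_status` function and the sign-off names are hypothetical.

```python
# Acceptance points taken from the text; adjust the list per project.
ACCEPTANCE_POINTS = [
    "power-on stability",
    "calibration completion",
    "interface testing",
    "operator training completion",
    "safety check review",
    "first controlled run under site conditions",
]


def handover_status(signoffs: dict[str, str]) -> tuple[bool, list[str]]:
    """Return (ready, pending) where pending lists points with no named sign-off.

    `signoffs` maps an acceptance point to the person who signed it off;
    a missing key or an empty name counts as pending.
    """
    pending = [point for point in ACCEPTANCE_POINTS if not signoffs.get(point)]
    return (not pending, pending)


# Illustrative sign-offs only.
signoffs = {
    "power-on stability": "Field engineer",
    "calibration completion": "Field engineer",
    "interface testing": "",  # tested but not yet signed
    "operator training completion": "Lab supervisor",
}

ready, pending = handover_status(signoffs)
print(ready)
print(pending)
```

Here handover is not ready, and the pending list names exactly which points still need a signature.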

A practical 6-point buying checklist

- Define the use case clearly: replacement, expansion, or new assay capability.

- Confirm the healthcare compliance requirements for the target region and facility type.

- Review technical performance under realistic workload, not only ideal demonstration conditions.

- Validate site readiness, including utilities, connectivity, environmental range, and workflow placement.

- Check maintenance model, service intervals, and spare parts support for at least the first 12 months.

- Set written acceptance criteria before shipment and confirm who signs off each step.

This kind of checklist supports all four audience groups. Researchers gain a framework for vendor screening, operators gain practical usability safeguards, procurement gains measurable comparison points, and enterprise leaders gain better visibility into lifecycle risk.

Typical deployment timeline to plan around

A routine IVD hardware project often moves through 4 phases: specification and vendor review, compliance and documentation review, site preparation and delivery, then installation and acceptance. Depending on complexity, this may span 4–12 weeks. Integrated sites with IT validation or multi-department signoff may take longer, especially when middleware or network approval is involved.

Planning around these phases allows teams to allocate responsibilities early. It also helps avoid a common deployment failure: the hardware arrives on time, but the site, documents, or users are not ready.

FAQ: what do buyers, operators, and decision-makers ask most often?

The questions below reflect common search and procurement intent around IVD hardware buying, medical equipment safety standards, and deployment planning. They are especially relevant when teams need a repeatable framework instead of vendor-by-vendor guesswork.

How do I know whether an IVD hardware quote is truly complete?

A complete quote should cover more than the base unit. Check for accessories, installation scope, initial consumables, operator training, preventive maintenance terms, spare part assumptions, software or interface components, and compliance-related documentation support. If these items are not clearly listed, the real project cost and timeline may shift after purchase approval.
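The completeness check above can be run mechanically against each quote. This is a minimal sketch: the item labels paraphrase the list in the answer, and the function name and sample quote are assumptions for illustration.

```python
# Line items a complete quote is expected to list, paraphrased from the text.
EXPECTED_QUOTE_ITEMS = [
    "base unit",
    "accessories",
    "installation scope",
    "initial consumables",
    "operator training",
    "preventive maintenance terms",
    "spare part assumptions",
    "software or interface components",
    "compliance documentation support",
]


def missing_quote_items(listed_items: list[str]) -> list[str]:
    """Return expected items that the quote does not list (case-insensitive)."""
    listed = {item.strip().lower() for item in listed_items}
    return [item for item in EXPECTED_QUOTE_ITEMS if item not in listed]


# Illustrative quote only.
quote = ["Base unit", "Accessories", "Operator training", "Initial consumables"]
print(missing_quote_items(quote))
```

Exact label matching is a simplification; in practice a reviewer would map each vendor's wording to these categories before running the check.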

What are the most overlooked medical equipment maintenance questions?

Buyers often overlook maintenance interval, cleaning burden, calibration frequency, and operator-replaceable parts. Another missed point is fault recovery: how quickly can the system return to routine operation after a common error or minor component failure? Asking these questions before purchase often prevents unplanned downtime later.

Which deployment factors matter most for laboratory operators?

Operators should focus on loading logic, user interface clarity, QC workflow, alarm handling, cleaning steps, and daily startup or shutdown time. A system that saves even 10–15 minutes per shift can create meaningful workflow value over a year, especially in labs with repetitive high-volume routines.
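The yearly effect of a 10–15 minute saving per shift is easy to estimate. The calculation below is a rough sketch: the 2 shifts per day matches the laboratory example used earlier in this checklist, while the 250 operating days per year is an assumption to replace with your own figure.

```python
def annual_hours_saved(minutes_per_shift: float,
                       shifts_per_day: int,
                       operating_days_per_year: int) -> float:
    """Convert a per-shift time saving into hours per year."""
    return minutes_per_shift * shifts_per_day * operating_days_per_year / 60


# Assumed operating pattern: 2 shifts per day, 250 operating days per year.
low = annual_hours_saved(10, shifts_per_day=2, operating_days_per_year=250)
high = annual_hours_saved(15, shifts_per_day=2, operating_days_per_year=250)

print(f"{low:.1f} to {high:.1f} operator hours per year")
```

Under these assumptions the saving is roughly 83 to 125 operator hours per year for a single device.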

How important is independent benchmarking in supplier selection?

It is highly useful when multiple suppliers present similar claims. Independent benchmarking helps compare technical integrity, regulatory readiness, and long-term reliability using a common evidence standard. For healthcare procurement teams, that reduces subjective judgment and supports better documentation for internal approval.

Why work with VSM when evaluating IVD hardware for procurement and deployment?

VitalSync Metrics (VSM) supports healthcare and life sciences buyers who need engineering clarity before they commit budget, time, and operational capacity. Instead of repeating supplier claims, VSM focuses on technical benchmarking, compliance-aware evaluation, and standardized decision support. That is particularly valuable in markets shaped by MDR/IVDR expectations, digital integration pressure, and value-based procurement scrutiny.

If your team is comparing life sciences instrumentation, reviewing healthcare compliance solutions, or trying to reduce deployment risk, VSM can help structure the process. Typical discussion points include parameter confirmation, procurement checklist design, vendor comparison methodology, maintenance planning, documentation gaps, and rollout readiness for single-site or multi-site projects.

You can also use VSM support when internal teams are aligned commercially but still uncertain technically. That often happens when decision-makers need independent review of service assumptions, installation conditions, interface requirements, or long-term reliability indicators before final approval. A more disciplined review at this stage can save weeks of correction after delivery.

If you are preparing for a purchase, contact VSM to discuss specification review, product selection, delivery timeline planning, compliance requirements, sample or evaluation support, and quotation comparison. The goal is simple: make IVD hardware deployment smoother, more defensible, and better aligned with real-world clinical and laboratory operations.
