What makes an endoscope image resolution benchmark useful?

An endoscope image resolution benchmark is useful only when it turns visual claims into measurable proof. For procurement teams, operators, and MedTech decision-makers, it supports medical device assessment, healthcare compliance, and conformity with medical equipment standards by revealing whether imaging performance is truly fit for clinical use. In a market that includes many wholesale endoscope suppliers in China, a rigorous benchmark helps separate marketing language from engineering reality.

In practical terms, readers approaching this topic want to know how to judge whether a resolution benchmark is actually reliable, decision-worthy, and relevant to clinical performance. They are not looking for a generic definition of image resolution. They want to understand what makes a benchmark trustworthy, which metrics matter, how test results should be interpreted, and how those results reduce procurement risk, support compliance, and improve user confidence.

For operators, the concern is whether image quality supports accurate visualization in real procedures. For procurement teams, the question is whether benchmark data helps compare suppliers on equal terms. For business decision-makers, the real issue is whether the benchmark reduces technical uncertainty, supports vendor qualification, and protects long-term investment.

What makes a resolution benchmark genuinely useful in endoscope evaluation?

A useful endoscope image resolution benchmark does more than report a high number. It connects image performance to real-world use, standardized testing, and reproducible evidence. If a benchmark cannot help a buyer or evaluator answer “Will this device deliver dependable visualization in actual clinical conditions?” then it has limited value.

In healthcare procurement and technical due diligence, a useful benchmark usually has five defining features:

- Clear test methodology: The benchmark explains how resolution was measured, under what lighting, distance, target pattern, and image settings.

- Reproducibility: Different labs or evaluators should be able to repeat the process and reach comparable results.

- Clinical relevance: The test conditions should reflect real use, not only ideal laboratory scenarios.

- Comparability: Results should allow side-by-side comparison across brands, models, and suppliers.

- Decision value: The output should help procurement, compliance, and engineering teams make a defensible choice.

This is especially important in a market where many products promote HD, Full HD, or “superior imaging” without disclosing how those claims were verified. A benchmark becomes useful only when it converts those claims into measurable and comparable engineering data.

Why do buyers and technical teams care about benchmark quality instead of just resolution claims?

Because headline specifications rarely tell the full story. An endoscope may advertise a high pixel count, but usable image resolution depends on the entire imaging chain: sensor quality, optics, illumination, signal processing, distortion control, noise behavior, and display output.
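One standard way to see why the whole chain matters is the modulation transfer function (MTF). Under the usual linear-systems assumption, the system MTF at each spatial frequency is the product of the component MTFs, so a soft lens or heavy processing caps the result regardless of sensor pixel count. The sketch below multiplies hypothetical component curves and estimates MTF50 (the frequency where contrast falls to 50%) by linear interpolation; the function names and all curve values are illustrative assumptions, not measurements of any device.

```python
from math import prod
from typing import Optional


def system_mtf(components: list[list[float]]) -> list[float]:
    """Multiply component MTF curves sampled on the same frequency grid."""
    length = len(components[0])
    if any(len(c) != length for c in components):
        raise ValueError("all component curves must share the same frequency grid")
    return [prod(values) for values in zip(*components)]


def mtf50(freqs: list[float], mtf: list[float]) -> Optional[float]:
    """Return the first frequency where MTF falls to 0.5, by linear interpolation."""
    for (f0, m0), (f1, m1) in zip(zip(freqs, mtf), zip(freqs[1:], mtf[1:])):
        if m0 >= 0.5 >= m1 and m0 != m1:
            return f0 + (m0 - 0.5) / (m0 - m1) * (f1 - f0)
    return None  # the curve never crosses 0.5 on this grid


# Hypothetical component curves sampled at 0, 10, 20 and 30 lp/mm.
freqs = [0.0, 10.0, 20.0, 30.0]
lens = [1.0, 0.90, 0.70, 0.45]
sensor = [1.0, 0.95, 0.80, 0.60]
processing = [1.0, 1.00, 0.95, 0.85]

combined = system_mtf([lens, sensor, processing])
print([round(v, 3) for v in combined])
print(mtf50(freqs, combined))
```

Even though each component alone stays above 0.5 at 20 lp/mm, the combined curve crosses 0.5 only slightly beyond that point, which is why a single component specification says little about delivered resolution.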

For example, two systems may both claim high-definition imaging, yet differ significantly in:

- Edge sharpness

- Center-to-corner resolution consistency

- Performance under low light

- Image noise at working distance

- Motion-related blur

- Color fidelity and contrast
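Center-to-corner consistency, in particular, can be reported as a simple ratio instead of a single best-point figure. The sketch below assumes limiting resolution has already been read from a chart at the image center and at four corners; the field-position names, the values, and the 0.7 acceptance threshold are hypothetical placeholders that an evaluation team would replace with its own protocol.

```python
def field_uniformity(center: float, corners: dict[str, float]) -> tuple[float, str]:
    """Return (worst corner / center, name of the worst corner)."""
    if center <= 0:
        raise ValueError("center resolution must be positive")
    worst_name = min(corners, key=lambda name: corners[name])
    return corners[worst_name] / center, worst_name


# Hypothetical limiting resolution in lp/mm read from a chart.
center_lp_mm = 8.0
corner_lp_mm = {"top_left": 6.4, "top_right": 6.0, "bottom_left": 5.2, "bottom_right": 6.2}

THRESHOLD = 0.7  # hypothetical acceptance level, not a published standard
ratio, worst = field_uniformity(center_lp_mm, corner_lp_mm)
verdict = "pass" if ratio >= THRESHOLD else "review"
print(f"worst corner {worst}: {ratio:.2f} of center -> {verdict}")
```

Reporting the worst corner rather than an average keeps a single weak region from being hidden by good performance elsewhere in the field.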

That is why procurement directors and lab evaluators need benchmarks that reflect actual performance, not brochure language. A weak benchmark can create false confidence. A strong benchmark helps identify whether a product is suitable for intended clinical tasks, supports risk management, and reduces the chance of purchasing equipment that underperforms after deployment.

Which benchmark elements matter most for procurement, compliance, and clinical usability?

The most useful resolution benchmarks are built around the questions stakeholders actually need answered.

For procurement teams, the key issue is standardized comparison. They need to know whether different suppliers were tested using the same protocol, same targets, same working distances, and same reporting rules. Without this, “benchmark data” may be impossible to compare fairly.
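A procurement team can make this check explicit by recording each supplier's test conditions in the same structure and listing where they differ before comparing any numbers. The field names below are hypothetical examples of what a protocol might fix, and "USAF 1951" is used only as an illustrative chart name.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class TestConditions:
    # Hypothetical fields a shared protocol might fix; adapt to the actual test plan.
    target: str
    working_distance_mm: float
    illumination_lux: float
    processing_mode: str


def mismatches(a: TestConditions, b: TestConditions) -> list[str]:
    """List the condition fields on which two supplier reports differ."""
    return [f.name for f in fields(TestConditions) if getattr(a, f.name) != getattr(b, f.name)]


supplier_a = TestConditions("USAF 1951", 20.0, 500.0, "enhancement off")
supplier_b = TestConditions("USAF 1951", 10.0, 500.0, "enhancement on")
print(mismatches(supplier_a, supplier_b))
```

An empty list is a precondition for a fair side-by-side comparison; any listed field means the two results were not produced under the same protocol.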

For operators and users, the concern is visibility in practice. Resolution should remain meaningful across realistic viewing angles, working distances, and tissue-like conditions. If test charts are used only at ideal alignment and brightness, the benchmark may overstate practical usability.

For compliance and quality teams, traceability matters. They need documented methods, calibration records, controlled conditions, and data integrity. In regulated environments, technical claims must be supported by evidence that can withstand audit, supplier review, or internal validation.

For business decision-makers, the focus is risk and return. A good benchmark should help answer:

- Does this device meet expected medical equipment standards?

- Can we defend this purchase technically and commercially?

- Will performance remain consistent across batches or over time?

- Are we buying real capability or polished marketing?

In this sense, useful benchmarking supports not only medical device assessment but also supplier governance, lifecycle planning, and long-term procurement quality.

How should a good endoscope image resolution benchmark be designed?

A strong benchmark design balances engineering rigor with clinical realism. It should not rely on one isolated metric. Instead, it should combine objective measurement with conditions that reflect intended use.

Useful design principles include:

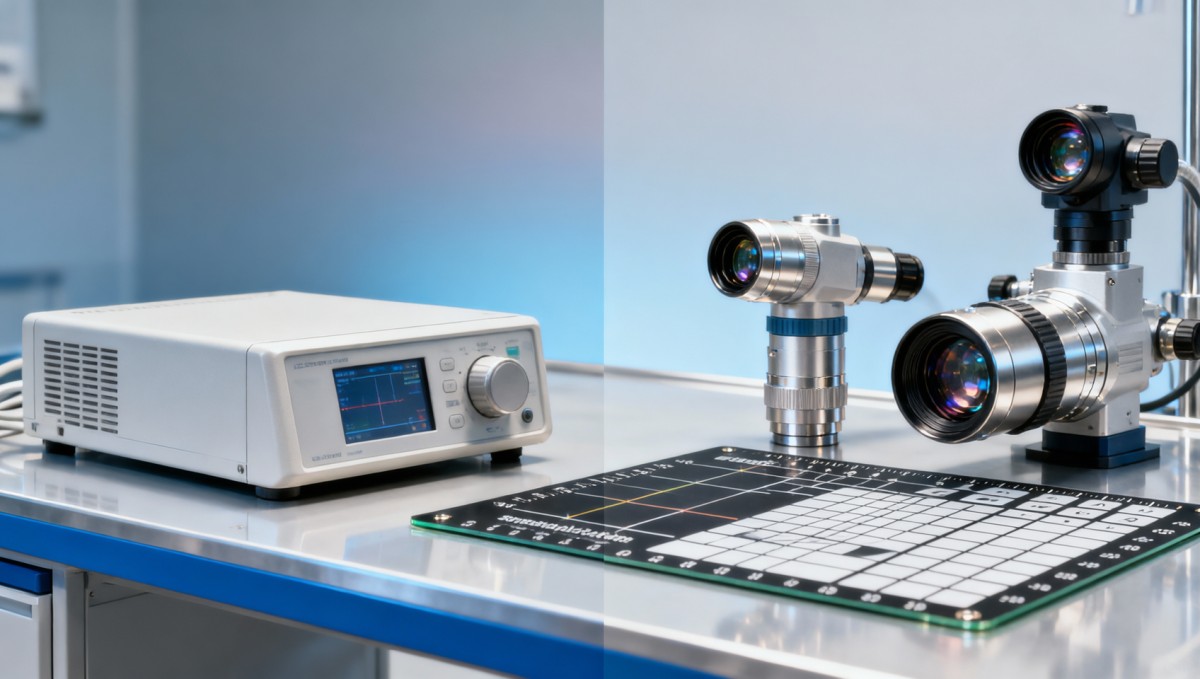

- Standardized test targets: Resolution charts that allow consistent measurement of line pairs or fine-detail discrimination.

- Defined working distances: Endoscope performance often changes substantially with distance from the target.

- Controlled illumination: Brightness strongly affects perceived and measured image sharpness.

- Optical field analysis: Resolution should be assessed at the center and edges, not only at the best point.

- Noise and contrast evaluation: Sharpness without usable contrast may still fail clinically.

- Repeat testing: Multiple runs help confirm stability rather than one-time best-case results.

- Transparent reporting: Raw conditions and interpretation criteria should be visible to the reader.
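For repeat testing, a report can summarize the spread of repeated measurements rather than quoting the best run. The sketch below uses Python's standard `statistics` module to report the mean, the sample standard deviation, the coefficient of variation, and the worst run; the function name and the five measurement values are hypothetical.

```python
from statistics import mean, stdev


def repeatability(runs: list[float]) -> dict[str, float]:
    """Summarize repeated measurements of the same quantity."""
    if len(runs) < 2:
        raise ValueError("need at least two runs to estimate spread")
    avg = mean(runs)
    sd = stdev(runs)  # sample standard deviation (n - 1 denominator)
    return {"mean": avg, "stdev": sd, "cv_percent": 100.0 * sd / avg, "worst": min(runs)}


# Hypothetical limiting resolution (lp/mm) from five repeated captures.
runs = [7.9, 8.1, 8.0, 7.8, 8.2]
summary = repeatability(runs)
print({key: round(value, 3) for key, value in summary.items()})
```

Quoting the worst run next to the mean shows a reader how far real captures can fall below the headline figure.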

Where possible, the benchmark should also distinguish between sensor resolution and effective visual resolution. This distinction matters because digital enhancement or processing may make an image appear sharper without increasing true optical detail capture.
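One quick sanity check for this distinction compares a measured limiting resolution with the sensor's sampling limit. A line pair needs at least two pixels, so the Nyquist frequency is 1 / (2 × pixel pitch). The sketch assumes measurements are already expressed at the image (sensor) plane and treats the sensor as monochrome; converting chart readings at the object to the image plane requires the optical magnification at the working distance, and Bayer color sensors sample each color more coarsely, so neither effect is modeled here. The pixel pitch and measured values are hypothetical.

```python
def nyquist_lp_per_mm(pixel_pitch_um: float) -> float:
    """Sensor Nyquist limit in line pairs per mm at the image plane.

    f_N = 1 / (2 * pitch); with pitch in micrometres this is 1000 / (2 * pitch) = 500 / pitch.
    """
    if pixel_pitch_um <= 0:
        raise ValueError("pixel pitch must be positive")
    return 500.0 / pixel_pitch_um


def check_claim(measured_lp_mm: float, pixel_pitch_um: float) -> str:
    """Compare a measured image-plane limiting resolution with the sensor limit."""
    ratio = measured_lp_mm / nyquist_lp_per_mm(pixel_pitch_um)
    if ratio > 1.0:
        # The sensor cannot sample real detail beyond Nyquist; apparently resolved
        # bars are more likely aliasing or processing artifacts.
        return f"{ratio:.0%} of Nyquist: implausible, inspect for aliasing or processing"
    return f"{ratio:.0%} of Nyquist"


PITCH_UM = 2.0  # hypothetical pixel pitch
print(nyquist_lp_per_mm(PITCH_UM))
print(check_claim(180.0, PITCH_UM))
print(check_claim(260.0, PITCH_UM))
```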

What makes some benchmarks misleading or low-value?

Not all benchmarks are equally informative. Some are too narrow, too idealized, or too vague to support real decisions.

Common warning signs include:

- Resolution results reported without test conditions

- No mention of distance, lighting, or lens configuration

- Only peak performance shown, with no consistency data

- No explanation of whether the image was digitally processed

- No comparison framework across competing products

- Use of non-standard internal scoring systems with unclear meaning
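Several of these warning signs can be screened mechanically when benchmark reports are collected in a structured form. The sketch below covers only a subset (missing conditions, single-run results, no processing statement, internal scoring); the report keys are hypothetical, and a missing comparison framework still needs human review.

```python
REQUIRED_CONDITIONS = ("working_distance_mm", "illumination", "lens_configuration", "target")


def warning_signs(report: dict) -> list[str]:
    """Return red flags found in a benchmark report (hypothetical field names)."""
    flags = [f"missing condition: {key}" for key in REQUIRED_CONDITIONS if key not in report]
    if report.get("repeat_runs", 1) < 2:
        flags.append("single run only: no consistency data")
    if "processing_statement" not in report:
        flags.append("no statement on digital processing")
    if report.get("scoring") == "internal":
        flags.append("non-standard internal score")
    return flags


report = {"target": "resolution chart", "repeat_runs": 1, "scoring": "internal"}
for flag in warning_signs(report):
    print(flag)
```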

These weaknesses matter because they can distort supplier selection. A benchmark that exaggerates performance under ideal conditions may lead to poor real-world outcomes, additional training burden, user dissatisfaction, or replacement costs. For organizations evaluating wholesale endoscope suppliers in China or global OEM offerings, this risk is especially relevant because the quality of technical documentation varies between vendors.

How can readers use benchmark data to make better decisions?

The most valuable benchmark is one that helps the reader act with confidence. That means turning test data into procurement logic, technical validation, or operational guidance.

Readers can use benchmark results more effectively by asking:

- Was the benchmark performed by an independent and technically credible party?

- Are the methods transparent enough to review or repeat?

- Do the test conditions reflect our use case?

- Can we compare this data directly with other shortlisted devices?

- Does the benchmark reveal limitations as well as strengths?

- Does it support compliance, supplier qualification, and internal approval processes?

If the answer to these questions is yes, the benchmark is likely useful beyond marketing. It becomes a practical tool for supplier selection, internal stakeholder alignment, and risk reduction.

For organizations working in value-based procurement, this is the real advantage. A robust benchmark does not simply confirm that an endoscope produces a sharp image. It helps verify whether the device delivers reliable, defensible performance relative to cost, specification, and intended use.

Conclusion: a useful benchmark is one that supports evidence-based buying and real clinical confidence

What makes an endoscope image resolution benchmark useful is not the size of the number it reports, but the quality of the evidence behind it. The best benchmarks are transparent, repeatable, clinically relevant, and comparable across products. They help users understand practical image quality, help procurement teams compare suppliers fairly, and help decision-makers reduce technical and commercial risk.

In today’s healthcare market, where compliance pressure, engineering complexity, and supplier diversity are all increasing, useful benchmarking is no longer optional. It is part of responsible medical device assessment. When done well, it turns image claims into measurable proof and gives buyers, operators, and MedTech leaders a stronger basis for choosing equipment that truly meets clinical and business expectations.
