Endoscope Image Resolution Benchmark: What Actually Matters

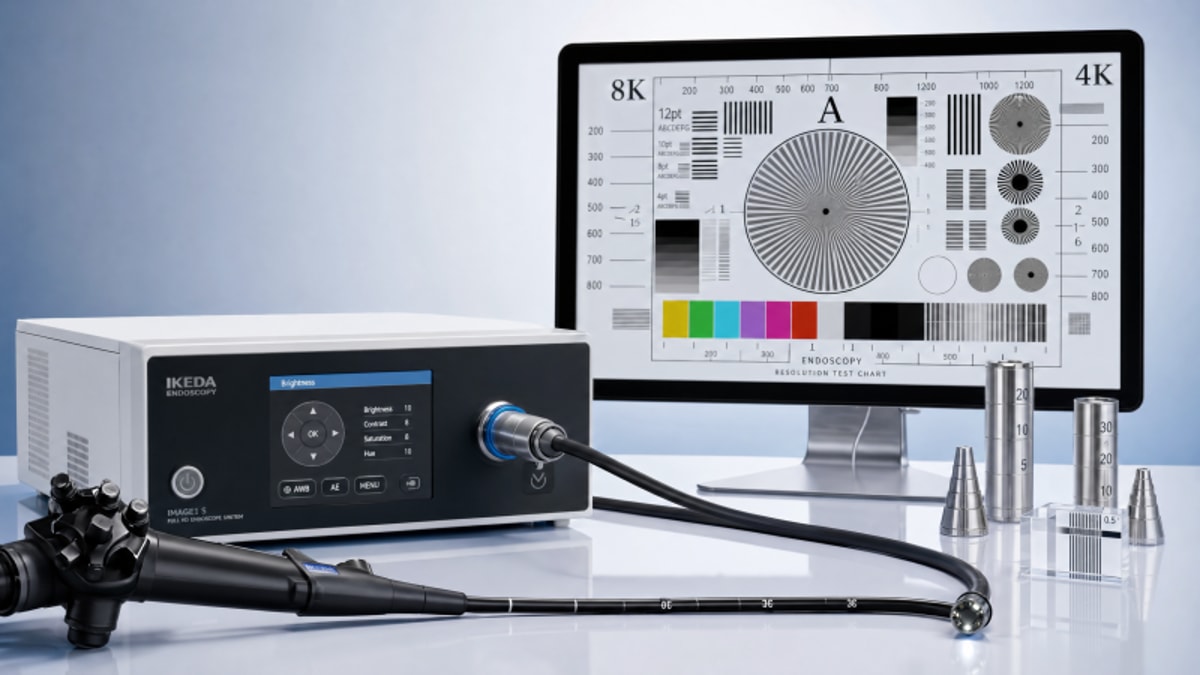

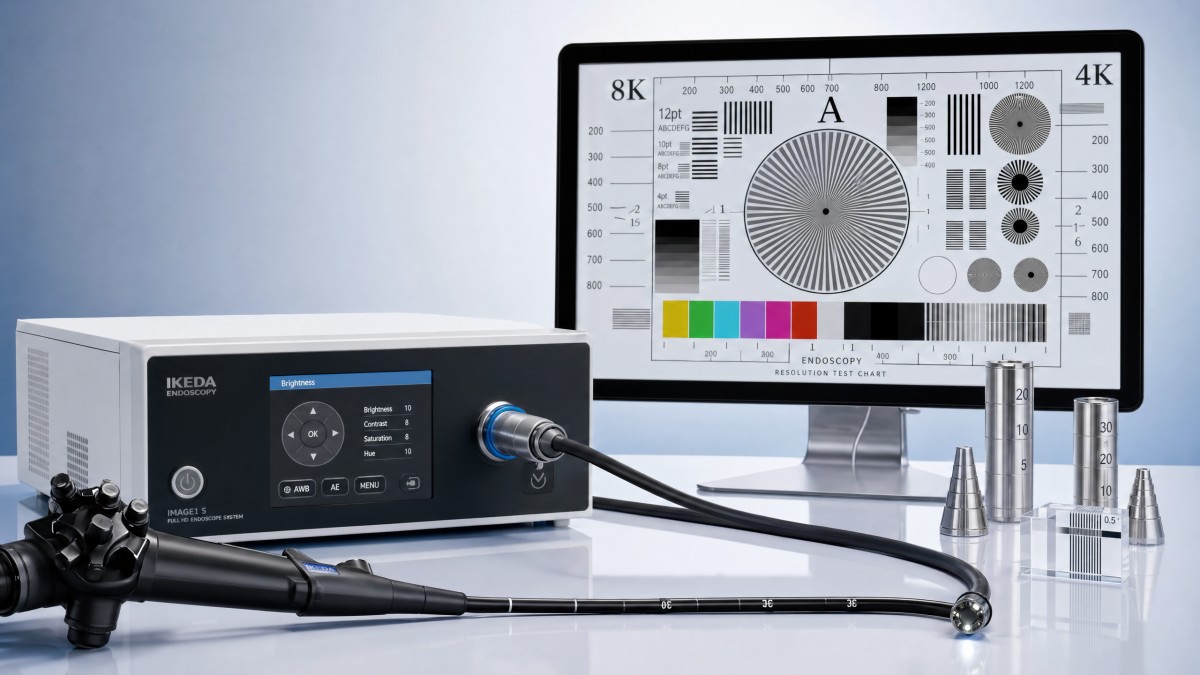

In any endoscope image resolution benchmark, the highest pixel count rarely tells the full story. For technical evaluators, what actually matters is how resolution performs under real clinical conditions—lighting variability, motion, noise control, optical distortion, and system integration. This article examines the engineering metrics behind image quality so procurement and product teams can separate measurable diagnostic value from spec-sheet marketing.

For hospital procurement teams, MedTech developers, and laboratory planners, image resolution is not a cosmetic specification. It directly affects lesion visibility, edge definition, color discrimination, training value, documentation quality, and integration with downstream digital systems. An endoscope image resolution benchmark is therefore most useful when it reflects real use conditions rather than idealized lab screenshots.

This matters even more in a value-based procurement environment, where technical integrity must be demonstrated across the full imaging chain. From sensor design to optics, processing pipeline, display path, and validation method, each component can add to or subtract from diagnostic clarity. For technical evaluators, the goal is to identify measurable performance thresholds, acceptable trade-offs, and hidden failure points before purchase or product launch.

Why Resolution Alone Is a Weak Procurement Metric

A common mistake in an endoscope image resolution benchmark is to treat nominal resolution as equivalent to usable detail. A 1920×1080 system can deliver less usable information than a lower-resolution system if its optics are soft, its noise reduction is too aggressive, or its image sharpening creates false edges. In technical evaluation, the question is not how many pixels are present, but how many clinically useful pixels survive the entire imaging path.

Usable resolution is shaped by at least 5 linked variables: sensor sensitivity, lens quality, illumination stability, processing latency, and display reproduction. In low-light cavities or narrow lumens, a system may raise gain to preserve brightness, but that often reduces contrast detail and increases temporal noise. If the benchmark ignores these operating conditions, the resulting score can overstate actual performance in procedures lasting 20–90 minutes.

Another issue is test distance. Some systems produce strong center sharpness at one fixed working distance, such as 20 mm, but degrade quickly at 10 mm or 50 mm. In real examinations, distance shifts constantly. Technical evaluators should therefore avoid single-point claims and request multi-distance measurements, edge-to-center comparisons, and repeated tests under at least 3 illumination levels.
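To make the multi-distance and edge-to-center request concrete, the sketch below compares center and edge sharpness at 10, 20, and 50 mm. The readings are hypothetical placeholders (MTF50 in cycles/pixel), and both the `sharpness_profile` helper and its 75% stability threshold are illustrative assumptions, not a standard acceptance limit.

```python
# Hypothetical MTF50 readings (cycles/pixel) per working distance in mm.
# Placeholder values for illustration only, not measured data.
readings = {
    10: {"center": 0.21, "edge": 0.12},
    20: {"center": 0.30, "edge": 0.22},
    50: {"center": 0.19, "edge": 0.14},
}

def sharpness_profile(readings, min_center_fraction=0.75):
    """Per distance: edge/center ratio, center sharpness relative to the best
    distance, and whether it stays above `min_center_fraction` (assumed threshold)."""
    peak_center = max(r["center"] for r in readings.values())
    profile = {}
    for distance, r in sorted(readings.items()):
        center_vs_peak = r["center"] / peak_center
        profile[distance] = {
            "edge_to_center": round(r["edge"] / r["center"], 2),
            "center_vs_peak": round(center_vs_peak, 2),
            "stable": center_vs_peak >= min_center_fraction,
        }
    return profile

profile = sharpness_profile(readings)
for distance, row in profile.items():
    print(distance, row)
```

With these made-up numbers, center sharpness at 10 mm and 50 mm falls to roughly 70% and 63% of the 20 mm peak, which is the kind of drop a single-distance claim would hide.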

What distorts the interpretation of pixel count

Marketing literature often promotes 4K, HD, or sensor megapixels as primary proof of superiority. Yet if the modulation transfer function drops sharply above mid-spatial frequencies, the extra pixels contribute little to perceivable anatomy. Likewise, aggressive compression for recording or network transfer may remove fine textures that mattered at the acquisition stage.

A practical benchmark should separate capture resolution, displayed resolution, and stored resolution. These 3 stages do not always match. In some integrated systems, the sensor captures more detail than the processor can preserve at 30 fps, while exported video is compressed again for archive compatibility. That discrepancy matters for audits, training libraries, and remote review workflows.
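One way to expose a mismatch between capture, display, and storage is to measure the same chart at each stage and report retention against the capture baseline. The MTF50 values below (in line widths per picture height) are invented for illustration, and `stage_retention` is a hypothetical helper.

```python
# Hypothetical MTF50 measurements (LW/PH) of the same chart at each stage of the imaging chain.
stages = [("capture", 620.0), ("displayed", 540.0), ("stored", 410.0)]

def stage_retention(stages):
    """Return detail retained at each stage relative to capture, plus the stage
    with the largest loss compared with the stage before it."""
    baseline = stages[0][1]
    retention = {name: round(value / baseline, 3) for name, value in stages}
    losses = {stages[i][0]: stages[i - 1][1] - stages[i][1] for i in range(1, len(stages))}
    worst_stage = max(losses, key=losses.get)
    return retention, worst_stage

retention, worst = stage_retention(stages)
print(retention)  # {'capture': 1.0, 'displayed': 0.871, 'stored': 0.661}
print(worst)      # stored
```

Measuring each stage assumes the chart can be captured from the processor output and from the exported archive file at the same framing; that capture method should itself be documented.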

Core questions for evaluators

- At what working distances does the system maintain stable center and edge sharpness?

- How does measured detail change under low, medium, and high illumination conditions?

- How much does the image degrade at 30 fps versus 60 fps during motion-heavy use?

- Does post-processing improve visibility or merely increase visual harshness?

The table below summarizes why raw pixel count should be treated as one input, not a final decision criterion, in any endoscope image resolution benchmark.

The main conclusion is straightforward: resolution claims become decision-grade only when tied to viewing conditions, optical behavior, and processing stability. That is why a disciplined endoscope image resolution benchmark must be broader than a single number on a brochure.

The Engineering Metrics That Actually Predict Image Quality

A strong endoscope image resolution benchmark should evaluate at least 6 technical dimensions. First is spatial resolution, often measured using test charts at defined distances such as 10 mm, 20 mm, and 50 mm. Second is contrast transfer, which determines whether low-contrast tissue boundaries remain visible. Third is signal-to-noise behavior, especially under reduced illumination where gain compensation becomes active.

Fourth is geometric distortion. Barrel or pincushion distortion may seem acceptable in general viewing, but it can affect scale perception near the field edge. Fifth is color accuracy and white-balance stability, both essential when subtle hue differences guide interpretation. Sixth is temporal fidelity, including frame stability, motion blur, and end-to-end latency. In practical systems, even a 100–150 ms delay can be relevant for precision handling or image-guided manipulation.
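Distortion is commonly reported as a percentage of radial position: the distance of a grid point from the optical center in the image, compared with where an undistorted system would place it. The sketch below assumes the ideal positions have already been derived from a known grid target; it is not tied to any particular chart or software package, and the coordinates are made up.

```python
import math

def radial_distortion_percent(measured_xy, ideal_xy, center_xy):
    """Percent distortion at one field point: (r_measured - r_ideal) / r_ideal * 100.
    Negative values indicate barrel distortion, positive values pincushion."""
    cx, cy = center_xy
    r_measured = math.hypot(measured_xy[0] - cx, measured_xy[1] - cy)
    r_ideal = math.hypot(ideal_xy[0] - cx, ideal_xy[1] - cy)
    return (r_measured - r_ideal) / r_ideal * 100.0

# Hypothetical edge-of-field grid point on a 1920x1080 frame
d = radial_distortion_percent(measured_xy=(1824, 540), ideal_xy=(1860, 540), center_xy=(960, 540))
print(round(d, 1))  # -4.0, i.e. barrel distortion at this point
```

Reporting this value at several field positions, not just one corner, shows how quickly scale perception degrades toward the edge.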

Technical evaluators should also distinguish between objective and perceptual metrics. Objective results such as MTF50, noise variance, and distortion percentage provide consistency. Perceptual review, however, reveals whether denoising smears micro-textures or whether edge enhancement creates halos. The best benchmark combines both approaches in a repeatable protocol.
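MTF50 is the spatial frequency at which contrast falls to half its low-frequency value. A common route is to differentiate an edge profile (edge spread function) into a line spread function and take its Fourier transform. The sketch below is a simplified version of that idea, assuming NumPy and a clean, vertical edge profile already averaged across rows. It omits the slanted-edge super-sampling used in ISO 12233-style tools, so it is not a substitute for a validated measurement package.

```python
import math
import numpy as np

def mtf50_from_esf(esf):
    """Estimate MTF50 in cycles/pixel from a 1-D edge spread function (ESF).

    Simplified sketch: no slanted-edge super-sampling, so frequencies are
    limited to the 0-0.5 cycles/pixel range of the sampled profile.
    """
    esf = np.asarray(esf, dtype=float)
    lsf = np.diff(esf)                   # line spread function = derivative of the ESF
    lsf = lsf * np.hanning(lsf.size)     # taper the ends to limit noise and leakage
    mtf = np.abs(np.fft.rfft(lsf))
    mtf = mtf / mtf[0]                   # normalize so MTF = 1 at zero frequency
    freqs = np.fft.rfftfreq(lsf.size)    # cycles/pixel for each FFT bin
    below = np.nonzero(mtf < 0.5)[0]
    if below.size == 0:
        return None                      # never drops below 50% in the sampled range
    i = below[0]
    # Linear interpolation between the last bin above 0.5 and the first bin below it
    f0, f1, m0, m1 = freqs[i - 1], freqs[i], mtf[i - 1], mtf[i]
    return f0 + (0.5 - m0) * (f1 - f0) / (m1 - m0)

# Usage on synthetic edges blurred by a Gaussian (illustrative, not device data)
x = np.arange(64) - 31.5

def synthetic_esf(sigma_px):
    return np.array([0.5 * (1.0 + math.erf(v / (sigma_px * math.sqrt(2.0)))) for v in x])

sharp = mtf50_from_esf(synthetic_esf(1.0))
blurred = mtf50_from_esf(synthetic_esf(2.0))
print(f"MTF50 sharp={sharp:.3f}, blurred={blurred:.3f} cycles/pixel")
```

The wider blur should produce a clearly lower MTF50. On real images, the same comparison between devices only holds if the edge target, distance, and lighting are kept identical.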

Recommended core metric set

If testing resources are limited, a practical minimum dataset can still support meaningful procurement decisions. At a minimum, evaluators should document 4 image states: bright static scene, low-light static scene, bright motion scene, and low-light motion scene. This 4-state model exposes many hidden weaknesses that a single chart image cannot reveal.

- Spatial detail at 3 working distances and at center plus edge positions.

- Noise behavior at 2–3 illumination levels, including gain activation points.

- Color consistency before and after white-balance changes.

- Latency and motion artifact evaluation during continuous movement.
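Before any scoring, it helps to confirm that the data set actually covers the 4-state model. The check below assumes each capture is logged as a record with `lighting` and `motion` fields; the field names, values, and file names are hypothetical.

```python
from itertools import product

# The 4 required image states: (lighting, motion)
REQUIRED_STATES = set(product(("bright", "low_light"), ("static", "motion")))

def missing_states(captures):
    """Return the required (lighting, motion) states not present in `captures`."""
    covered = {(c["lighting"], c["motion"]) for c in captures}
    return sorted(REQUIRED_STATES - covered)

captures = [
    {"lighting": "bright", "motion": "static", "file": "chart_bright_static.png"},
    {"lighting": "low_light", "motion": "static", "file": "chart_low_static.png"},
    {"lighting": "bright", "motion": "motion", "file": "clip_bright_motion.mp4"},
]
print(missing_states(captures))  # [('low_light', 'motion')]
```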

The following table offers a procurement-oriented view of the most useful metrics in an endoscope image resolution benchmark.

For technical teams, the benefit of this metric set is comparability. It turns visual impressions into engineering evidence and helps align procurement, quality, R&D, and regulatory stakeholders around the same acceptance logic.

How to Design a Benchmark That Reflects Clinical Reality

A credible endoscope image resolution benchmark should mirror actual use rather than ideal showroom conditions. That means defining a repeatable protocol with controlled variables, but also introducing clinically realistic stressors. At minimum, evaluators should plan tests across 3 brightness states, 2 motion states, and multiple working distances. If the endoscope is intended for narrow channels or reflective surfaces, those scenarios should be included from the start.
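The minimum plan described above (3 brightness states, 2 motion states, multiple working distances) can be enumerated so that no combination is skipped. In this sketch the distances reuse the 10, 20, and 50 mm examples from earlier in the article, and `TestCondition`, `build_test_matrix`, and the scenario labels are hypothetical names.

```python
from dataclasses import dataclass
from itertools import product

@dataclass(frozen=True)
class TestCondition:
    brightness: str
    motion: str
    distance_mm: int
    scenario: str = "standard"

def build_test_matrix(distances_mm=(10, 20, 50), extra_scenarios=()):
    """Enumerate every brightness x motion x distance combination, per scenario."""
    brightness = ("low", "medium", "high")
    motion = ("static", "moving")
    scenarios = ("standard",) + tuple(extra_scenarios)
    return [TestCondition(b, m, d, s)
            for s, b, m, d in product(scenarios, brightness, motion, distances_mm)]

matrix = build_test_matrix()
print(len(matrix))  # 18
with_reflective = build_test_matrix(extra_scenarios=("reflective_surface",))
print(len(with_reflective))  # 36
```

Adding a use-specific scenario, such as a reflective surface, duplicates the full grid for that scenario rather than testing it at a single convenient setting.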

The test environment should document light source settings, target type, sensor mode, processing profile, output interface, and display characteristics. A benchmark that changes monitor settings or processing presets between devices is difficult to defend in procurement review. Consistency across the full chain is as important as the measurements themselves.
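Chain consistency can be checked mechanically if every device run logs its settings in the same structure. The field names and values below are assumptions that mirror the items listed above; sensor mode is left out on the assumption that it legitimately varies by device.

```python
# Settings that should be identical across all device runs (assumed field names).
SHARED_FIELDS = ("light_source_setting", "target_type", "processing_profile",
                 "output_interface", "monitor_preset")

def inconsistent_fields(runs):
    """Return {field: sorted distinct values} for shared fields that differ between runs."""
    issues = {}
    for field in SHARED_FIELDS:
        values = {run.get(field) for run in runs}
        if len(values) > 1:
            issues[field] = sorted(values, key=str)
    return issues

runs = [
    {"device": "A", "light_source_setting": "level_3", "target_type": "edge_chart",
     "processing_profile": "default", "output_interface": "SDI", "monitor_preset": "clinical"},
    {"device": "B", "light_source_setting": "level_3", "target_type": "edge_chart",
     "processing_profile": "default", "output_interface": "SDI", "monitor_preset": "vivid"},
]
print(inconsistent_fields(runs))  # {'monitor_preset': ['clinical', 'vivid']}
```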

It is also wise to split testing into laboratory and workflow phases. Laboratory testing creates objective comparability. Workflow testing reveals practical issues such as heat drift, fogging response, white-balance recovery time, and image stability after 30–60 minutes of continuous operation. Many systems look similar in the first 5 minutes and diverge only under sustained use.
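Sustained-use drift can be summarized by re-measuring the same metric at intervals and comparing each reading with the start-of-session baseline. The readings and the 10% tolerance below are illustrative assumptions, not recommended limits, and `drift_report` is a hypothetical helper.

```python
def drift_report(series, tolerance=0.10):
    """series: list of (minutes, value) for one metric; the first entry is the baseline.
    Returns (minute, relative_change) pairs whose absolute change exceeds `tolerance`."""
    baseline = series[0][1]
    return [(t, round((v - baseline) / baseline, 3))
            for t, v in series[1:]
            if abs(v - baseline) / baseline > tolerance]

# Hypothetical center MTF50 readings (cycles/pixel) during continuous operation
mtf50 = [(0, 0.300), (15, 0.296), (30, 0.281), (45, 0.262), (60, 0.255)]
print(drift_report(mtf50))  # [(45, -0.127), (60, -0.15)]
```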

A 5-step evaluation workflow

- Define intended use, working distance range, and required frame rate for the target procedure or application.

- Standardize hardware chain, including processor, cable path, monitor, and recording output.

- Run objective chart-based tests under 3 light levels and at least 2 motion conditions.

- Conduct simulated-use review with repeated white-balance, repositioning, and continuous operation.

- Score findings against pre-agreed thresholds for image quality, integration, and serviceability.

Common protocol weaknesses

Several avoidable errors reduce benchmark quality. One is testing only the image center. Another is ignoring the display path, even though a poor monitor can mask true device differences. A third is comparing still images when the clinical workflow is primarily video-based. For many applications, motion fidelity and lag can be as important as static sharpness.

A useful acceptance scorecard should include both pass/fail criteria and weighted criteria. For example, a procurement team may decide that latency above a defined threshold is non-negotiable, while edge sharpness loss of 10–15% may be acceptable if low-light noise remains controlled. The weighting should reflect intended use, not generic vendor rankings.
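The gate-plus-weight logic described above can be expressed as a short function. Every number in it (the 120 ms latency gate, the 10% and 15% edge-loss bounds, the noise ceiling, the 0-10 panel scores, and the weights) is a placeholder to be replaced by the team's pre-agreed thresholds, and the function and field names are hypothetical.

```python
# Hypothetical criterion weights; panel scores are assumed to be on a 0-10 scale.
WEIGHTS = {"center_sharpness": 0.3, "low_light_noise": 0.3,
           "color_consistency": 0.2, "integration": 0.2}

def evaluate(device, max_latency_ms=120.0, soft_edge_loss=0.10,
             max_edge_loss=0.15, max_lowlight_noise=0.02):
    """Apply hard gates first, then return a weighted score for devices that pass.
    `lowlight_noise` is assumed to be noise std divided by mean signal on a flat patch."""
    # Non-negotiable gate: latency above the agreed threshold fails outright.
    if device["latency_ms"] > max_latency_ms:
        return {"pass": False, "reason": "latency", "score": None}
    # Edge loss beyond the upper bound always fails; loss between the soft and
    # upper bounds is tolerated only when low-light noise stays controlled.
    edge_loss = device["edge_sharpness_loss"]
    if edge_loss > max_edge_loss or (
            edge_loss > soft_edge_loss and device["lowlight_noise"] > max_lowlight_noise):
        return {"pass": False, "reason": "edge sharpness", "score": None}
    score = sum(w * device["scores"][name] for name, w in WEIGHTS.items())
    return {"pass": True, "reason": None, "score": round(score, 2)}

device_a = {"latency_ms": 95, "edge_sharpness_loss": 0.12, "lowlight_noise": 0.015,
            "scores": {"center_sharpness": 8, "low_light_noise": 7,
                       "color_consistency": 6, "integration": 9}}
device_b = dict(device_a, latency_ms=160)
print(evaluate(device_a))  # {'pass': True, 'reason': None, 'score': 7.5}
print(evaluate(device_b))  # {'pass': False, 'reason': 'latency', 'score': None}
```

Keeping gates separate from weights means a high weighted score can never compensate for a failed non-negotiable criterion.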

This structured approach is particularly relevant for organizations that must justify supplier selection under audit pressure. Independent benchmarking frameworks, such as those used by technically focused groups like VitalSync Metrics, are valuable because they convert scattered performance claims into consistent engineering documentation.

Procurement Red Flags and Selection Criteria for Technical Evaluators

In procurement reviews, image quality should never be isolated from lifecycle questions. An endoscope image resolution benchmark may identify strong initial performance, but the final decision must also examine repeatability, maintainability, compatibility, and compliance readiness. Technical evaluators should ask whether image output remains stable after service events, cable changes, or software updates.

One red flag is a specification package that highlights output resolution but omits noise behavior, distortion profile, or latency range. Another is the absence of stated test conditions. If a vendor cannot state the working distance, illumination level, or frame rate used to generate benchmark images, a meaningful comparison becomes difficult. For regulated environments, incomplete technical traceability can slow evaluation and increase downstream validation work.

Selection criteria should therefore connect imaging quality to procurement practicality. Service intervals, spare availability, software support windows, and accessory compatibility can all affect total cost of ownership over 3–7 years. A system with slightly lower headline resolution may still be the better choice if it delivers stable reproducibility, lower integration risk, and faster validation.

Decision factors beyond image sharpness

Technical evaluators often benefit from scoring devices across four domains: image performance, system integration, compliance documentation, and service support. This prevents a narrow buying decision based on visually attractive demo footage. It also aligns engineering review with procurement governance and long-term operational risk.

The table below can be used as a practical selection framework during supplier comparison or pre-award technical review.

The key takeaway is that procurement-grade benchmarking must support a defendable decision, not just a visually impressive demo. That is where independent technical assessment creates measurable value for healthcare supply chain stakeholders.

FAQ for Teams Running an Endoscope Image Resolution Benchmark

How many test conditions are usually enough for a useful benchmark?

A practical minimum is 4 to 6 conditions: the 4 core states (bright static, low-light static, bright motion, and low-light motion), plus coverage of at least 2 working distances. If the intended workflow includes rapid repositioning or reflective tissue environments, add those conditions as separate checks. Testing fewer than 4 states often misses the main failure modes.

Should evaluators prioritize 4K systems over HD systems?

Not automatically. A higher output format only helps when optics, sensor quality, processing bandwidth, and display infrastructure preserve the extra information. In some use cases, a well-optimized HD system with lower noise and better motion control can outperform a nominally higher-resolution alternative in diagnostic usability.

What is the most overlooked factor in image quality comparison?

Low-light behavior is frequently underestimated. Systems that appear equivalent under bright test lighting can separate quickly once gain rises. Noise texture, color instability, and detail smearing often become visible only under these stressed conditions, which is why low-light testing should be mandatory.
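A simple way to make low-light behavior visible in the data is to track the signal-to-noise ratio of a uniform patch as gain rises. The sketch below, assuming NumPy, computes SNR in decibels as 20 × log10(mean / standard deviation) on synthetic patches; the gain steps and noise levels are invented. Because a single frame mixes temporal noise with fixed-pattern noise and shading, a fuller protocol would also difference repeated frames.

```python
import numpy as np

def patch_snr_db(patch):
    """SNR of a uniform (flat-field) patch in dB: 20 * log10(mean / std).
    Assumes the patch images a uniform target, so all pixel variation counts as noise."""
    patch = np.asarray(patch, dtype=float)
    return 20.0 * np.log10(patch.mean() / patch.std(ddof=1))

rng = np.random.default_rng(0)
# Synthetic flat patches: same mean level, noise rising with gain (illustrative values only)
synthetic = {gain_db: rng.normal(120.0, sigma, size=(64, 64))
             for gain_db, sigma in [(0, 2.0), (12, 6.0), (24, 12.0)]}
for gain_db, patch in synthetic.items():
    print(f"gain {gain_db:>2} dB: SNR {patch_snr_db(patch):.1f} dB")
```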

How often should benchmark data be refreshed during procurement or development?

Refresh testing whenever there is a meaningful change in sensor, optics, firmware, processor, or display chain. For ongoing procurement frameworks, an annual review cycle or revalidation during major software revisions is a reasonable baseline. This helps ensure that accepted performance still reflects the shipped configuration.

A robust endoscope image resolution benchmark is less about chasing the highest number and more about proving reliable image performance under realistic conditions. Technical evaluators should focus on retained detail, low-light behavior, distortion control, motion fidelity, and system-level repeatability. When those factors are documented in a structured benchmark, procurement teams gain a stronger basis for supplier comparison, risk reduction, and long-term value.

For organizations that need evidence-based benchmarking rather than marketing language, VitalSync Metrics supports technical due diligence through standardized engineering review and decision-ready documentation. To discuss a tailored benchmark framework, compare imaging systems, or refine your procurement criteria, contact us to get a customized evaluation plan and explore more healthcare technology assessment solutions.
